Libvirt overwrites the existing iptables rules

From WBITT's Cooker!


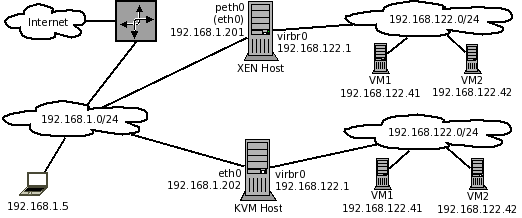

| - | [[File:Libvirt-iptables-problem-xen-kvm-setup-1.png|frame|none|alt=|Figure | + | [[File:Libvirt-iptables-problem-xen-kvm-setup-1.png|frame|none|alt=|Figure 4: Our lab setup, to evaluate iptables problems with XEN and KVM]] |

Revision as of 11:22, 13 February 2011

NOTE: This document may contain text, which is horribly wrong. This page is just my scratch pad for my personal notes, on this beast. So until I get it right, you are advised not to read anything in this document. It may shatter your existing iptables/virtualization concepts.

Please send your thoughts/comments to: kamran at wbitt dot com.

Introduction

The traditional way to host a service (web, mail, db, etc.) was to acquire separate physical servers and install the necessary software on them. However, the world realized that most servers (web servers in particular) idle at around 5% CPU usage. To avoid wasting resources, smart people introduced virtualization, and everyone jumped at it. It turned out that virtualization, introduced to save resources, also solved another problem in parallel: the security problem. With virtualization, everyone (if they want to) can separate the various service domains. A DB server, a web server and a mail server can each run as a separate VM, all on a single physical machine. This way, if a cracker gains access to one VM, the other service domains remain safe, which reduces the extent and severity of a service outage.

Note: The word "domain" in this text has nothing to do with the "domain/workgroup" concept as used by Microsoft.

XEN and KVM are the leading virtualization technologies of choice. They provide three models to set up networking on the physical host.

- Shared Physical Network Device (xenbr0 in XEN) (br0 in KVM)

- NAT based Virtual Network (virbr0)

- Routed Network

In this text, we will focus on NAT based virtual networks (virbr0). We will also use the terms XEN and KVM interchangeably, because we are using CENTOS to analyse the behaviour of virtual networks, and both XEN and KVM are available by default on our CENTOS platform. On CENTOS, both use the same virtualization API (libvirt) to manage the VMs, even though their underlying technologies are different: XEN is a paravirtualization technology, and KVM is a hardware-assisted virtualization technology.

For service hosting purposes, it is best to use the shared device model. In this model, all VMs share the physical network card of the host, as well as the IP scheme of the network to which that card is connected. This means, of course, that more IPs from the same network must be available, so they can be assigned to the VMs. In this way, all VMs appear on the network as if they were just another physical server. This is the easiest way to connect your VMs to your infrastructure and make them accessible. (We have labelled it as Rich-Man's setup, in the solutions section below).

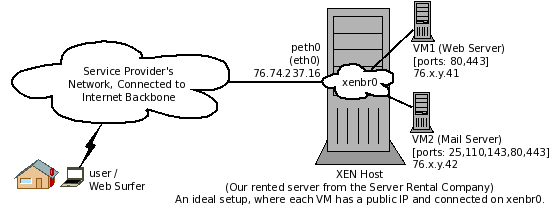

Below is an example of such a situation, where a Web Server and a Mail Server run as virtual machines on a publicly accessible server (XEN), rented from a server rental company which facilitates the use of bridged virtual networks.

At times there are requirements (for security reasons) or restrictions/limitations (from the infrastructure side) because of which the shared device model cannot be used. In that case, we have to use the NAT model. A few cases in which you must use the NAT model are:

- When your servers are on the public network, such as in a public data center, and you have a limited number of public IPs to use for your servers. You may have to pay extra (along with submitting a justification), for extra public IPs; and you don't want to.

- When your service provider has an infrastructure which was not designed with virtualization in mind. Such infrastructures have a restricted way of billing the servers, services or traffic, normally tied to the MAC address of the physical network card of your servers.

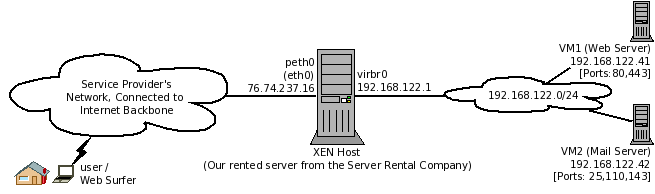

The NAT model has a long list of restrictions, limitations and problems. Most notably, these VMs can only be accessed through the single visible / public IP of the physical host they are hosted on.

The Problem

The problem is faced, when a virtual machine (on the private network) needs to be accessed from the outside-network, instead of a virtual machine accessing the outside-network. [Note the difference, here]. The virtual machine in discussion is on a (non-routeable) private network, inside a XEN or KVM host, connected to virbr0 interface of the physical host. This is the type of setup, which is used in situations, where you have limitations/restrictions connecting your VMs directly to the outside-network. ServerBeach, a reputed server-rental company / hosting provider, is one example.

Note: The libvirtd service (libvirt layer) provides/ sets up the private network (virbr0) on all RedHat based operating systems, such as RHEL, Fedora, CENTOS, etc.

In such a scenario, the administrator of the physical host would naturally create certain forwarding (or DNAT) rules, to allow the traffic coming from the outside to be redirected to a VM. While doing so, it is observed that whenever the libvirtd or xend service is restarted, or the physical host is rebooted, any iptables rules written by the administrator are over-written/modified (by some unknown process). For a long time, XEN has been (incorrectly) accused of doing so.

In this text, we will explain that it is not XEN which is over-writing/modifying the iptables rules. It is actually "libvirt" which is doing so. And so far, at the time of this writing, there is no solution for it. It is a known bug, still in the OPEN/ASSIGNED state at the redhat and fedora bugzilla websites. (https://bugzilla.redhat.com/show_bug.cgi?id=227011)

The diagram below is a typical lab setup. This will help us to explain, reproduce and analyse the problem. Note, that in this diagram, the network 192.168.1.0/24 "acts" as public network.

Objective / goal of this document

The objective of this document is to identify/clarify the following:

- What are these specific iptables rules?

- Why do we care? and, When should we care?

- Does it matter if we lose these rules?

- Does it matter when we have our virtual machines on a bridged interface, connecting directly to our physical LAN, xenbr0 or br0?

- Does it matter when we have our virtual machines connected only on the private network inside the physical host, virbr0?

- How do we circumvent any problems related to this scenario?

The test setup

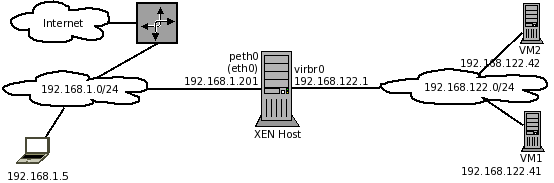

In our test setup, we have two Dell Optiplex PCs, and a laptop to access them, and their VMs, all connected to a switched network. The network is also connected to internet, through DSL. Both Dell PCs have CENTOS 5.5 x86_64, installed on them, and were updated using the "yum update" command. One of them is XEN host, and the other is KVM host.

- XENhost (192.168.1.201) [CENTOS 5.5 64bit]

- KVMhost (192.168.1.202) [CENTOS 5.5 64bit]

- Laptop (192.168.1.5) [Fedora 14. OS on the client machine is irrelevant to the discussion]

Note: Though the IPs from 192.168.1.0/24 network are actually non-routable private-IPs, still, for the sake of our example/test setup, we will use the term "Public IP" for them, most of the time, in the text below. The IPs from 192.168.122.0/24 network will be considered private.

The diagram below, shows the setup described above.

The version numbers of the key packages, with the default CENTOS 5.5 installation are:

- kernel-xen-2.6.18-194.el5.x86_64

- xen-3.0.3-105.el5.x86_64

- xen-libs-3.0.3-105.el5.x86_64

- libvirt-0.6.3-33.el5.x86_64

- libvirt-python-0.6.3-33.el5.x86_64

- iptables-1.3.5-5.3.el5_4.1.x86_64

The version numbers of the key packages, after the CENTOS 5.5 installation was updated, using "yum update":

- kernel-xen-2.6.18-194.32.1.el5.x86_64

- xen-3.0.3-105.el5_5.5.x86_64

- xen-libs-3.0.3-105.el5_5.5.x86_64

- libvirt-0.6.3-33.el5_5.3.x86_64

- libvirt-python-0.6.3-33.el5_5.3.x86_64

- iptables-1.3.5-5.3.el5_4.1.x86_64.rpm [Remained at previous version number.]

Problem analysis

Example/simple firewall/iptables rules on our XEN server

To analyse the problem, we have first created a very simple iptables rule-set. LOG rules are added to all chains of the nat and filter tables, i.e. the INPUT, FORWARD and OUTPUT chains of the filter table, and the PREROUTING, POSTROUTING and OUTPUT chains of the nat table. These rules do nothing except log any traffic that passes through that particular chain. There is no advantage in logging the traffic in our scenario. However, if later on these rules disappear or get changed, it will prove our point that it is actually libvirt which is messing with them.

Note: There is an OUTPUT chain in filter table, and there is an OUTPUT chain in the nat table. They are not the same. They are two different chains.

Here is our rules set. Notice that we also have a rule to block any incoming SMTP request to this server, solely for the sake of example.

[root@xenhost ~]# iptables -L

Chain INPUT (policy ACCEPT)

target prot opt source destination

LOG all -- anywhere anywhere LOG level warning

REJECT tcp -- anywhere anywhere tcp dpt:smtp reject-with icmp-port-unreachable

Chain FORWARD (policy ACCEPT)

target prot opt source destination

LOG all -- anywhere anywhere LOG level warning

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

LOG all -- anywhere anywhere LOG level warning

[root@xenhost ~]#

[root@xenhost ~]# iptables -L -t nat

Chain PREROUTING (policy ACCEPT)

target prot opt source destination

LOG all -- anywhere anywhere LOG level warning

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

LOG all -- anywhere anywhere LOG level warning

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

LOG all -- anywhere anywhere LOG level warning

[root@xenhost ~]#

These rules are actually coming from the file: /etc/sysconfig/iptables. The iptables service reads this file and applies these rules, when it is started.

[root@xenhost ~]# cat /etc/sysconfig/iptables

*filter

:INPUT ACCEPT [226:16512]

:FORWARD ACCEPT [0:0]

:OUTPUT ACCEPT [125:15872]

-A INPUT -i eth0 -j LOG

-A INPUT -i eth0 -p tcp -m tcp --dport 25 -j REJECT --reject-with icmp-port-unreachable

-A FORWARD -j LOG

-A OUTPUT -o eth0 -j LOG

COMMIT

*nat

:PREROUTING ACCEPT [2:64]

:POSTROUTING ACCEPT [2:148]

:OUTPUT ACCEPT [2:148]

-A PREROUTING -i eth0 -j LOG

-A POSTROUTING -o eth0 -j LOG

-A OUTPUT -o eth0 -j LOG

COMMIT

[root@xenhost ~]#
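To check later whether a rule-set like this one has been changed, two iptables-save snapshots can be compared. The packet and byte counters change all the time, so they have to be stripped first. The following is a minimal sketch of that idea. The function name normalize_rules and the snapshot file names in the comments are our own assumptions, not part of iptables or libvirt.

```shell
#!/bin/bash
# Remove the noise from iptables-save output, so that two snapshots
# differ only when the rules themselves differ:
#   - comment lines (they start with '#')
#   - the trailing [packets:bytes] counters on the chain lines
normalize_rules() {
    sed -e '/^#/d' -e 's/ \[[0-9]*:[0-9]*]$//'
}

# Small demonstration on sample text (no root access needed).
printf ':INPUT ACCEPT [226:16512]\n-A INPUT -i eth0 -j LOG\n' | normalize_rules

# Intended use on a real host, as root (file names are hypothetical):
#   iptables-save | normalize_rules > /root/rules-before.txt
#   service libvirtd restart
#   iptables-save | normalize_rules > /root/rules-after.txt
#   diff /root/rules-before.txt /root/rules-after.txt
```

An empty diff would mean that the restart did not touch the rules. Any line reported by diff is a rule that was added, removed or moved.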

It is important to note that a server which is connected to the Internet normally controls only (or mainly) the incoming traffic. An internet web server, for example, is normally protected by a host firewall, i.e. iptables rules configured on the server itself, to restrict/control the traffic arriving on its INPUT chain. Since the server administrator "trusts" the server itself, the outgoing traffic (the OUTPUT chain) does not have to be controlled, so no rules are configured on the OUTPUT chain. Also, since such a server is the end-point of any two-way internet communication, there is normally no need to configure any rules on the FORWARD chain, nor on the PREROUTING, POSTROUTING and OUTPUT chains of the nat table.

In case of a XEN or KVM host, we would be having one or more virtual machines, behind the said server. The traffic to/from the VMs will have to pass through the FORWARD chain on the physical host. Thus FORWARD chain has significant importance in this case, and needs to be both protected against abuse, and at the same time, facilitate traffic between VMs and the physical host (and the outside world). Since the VMs in our particular case are on a private network, inside the physical host, we would certainly need PREROUTING rules to redirect (DNAT) any traffic towards the VMs. Such as traffic coming in on port 80 on the public IP of this physical host, may need to be forwarded to VM1, which can be a web server. Also SMTP, POP and IMAP may need to be forwarded to VM2, which can be a mail server.

The POSTROUTING chain also has a significant role in our case, because any traffic coming out of the VMs, going towards the internet, will need to be translated to the IP of the public interface of the physical host. We normally need SNAT or MASQUERADE rules here.
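As an illustration of the two nat chains described above, the sketch below prints, in iptables-save syntax, the kind of rules an administrator might write to publish one TCP port of a VM. It only prints text and does not change any firewall. The function name print_nat_rules and the VM address 192.168.122.10 are assumptions made for this example; 192.168.1.201 is the "public" IP of our XEN host.

```shell
#!/bin/bash
# Print nat-table rules that publish one TCP port of a VM.
# Arguments: public IP of the physical host, TCP port, private IP of the VM.
print_nat_rules() {
    local public_ip="$1" port="$2" vm_ip="$3"

    # Incoming traffic: rewrite the destination to the VM (DNAT).
    printf -- '-A PREROUTING -d %s -p tcp -m tcp --dport %s -j DNAT --to-destination %s:%s\n' \
        "$public_ip" "$port" "$vm_ip" "$port"

    # Outgoing traffic from the VM network: rewrite the source to the
    # public IP of the host (SNAT). MASQUERADE would be the alternative
    # when the public IP is not known in advance.
    printf -- '-A POSTROUTING -s 192.168.122.0/24 -o eth0 -j SNAT --to-source %s\n' \
        "$public_ip"
}

# Port 80 on the host forwarded to a (hypothetical) web server VM.
print_nat_rules 192.168.1.201 80 192.168.122.10
```

The printed lines could be placed in the *nat section of a rules file. Whether they survive a restart of libvirtd is exactly the question this document investigates.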

We would still follow the principle of not hosting any unnecessary services on the physical host itself, such as SMTP, HTTP, etc. However, we would still need to protect this physical host from any malicious traffic and attacks directly targeted at it, such as various forms of ICMP attacks.

Note that we do not intend to protect the virtual machines hosted on this server using the iptables rules on the physical host. That is (a) a very bad practice, as it complicates the firewall rules on the physical host each time a VM is added/removed/started/shut down, and (b) it over-loads the server with the firewall role, in addition to managing the VMs inside it. The best practice is to let the physical host do only the VM management, and protect the virtual machines using the host firewalls on the VMs themselves. If resources on the physical host permit, then an additional VM can be created, working solely as a firewall for all the VMs. However, this is beyond the scope of this document.

The default iptables rules on a XEN physical host

For the time being, for the sake of ease of understanding, we have stopped the iptables service. This will help us observe, what iptables rules are set up, when a XEN or KVM host boots up.

Below are the iptables rules found on a XEN host. Note that there is no VM running on the host at this time.

[root@xenhost ~]# iptables -L

Chain INPUT (policy ACCEPT)

target prot opt source destination

ACCEPT udp -- anywhere anywhere udp dpt:domain

ACCEPT tcp -- anywhere anywhere tcp dpt:domain

ACCEPT udp -- anywhere anywhere udp dpt:bootps

ACCEPT tcp -- anywhere anywhere tcp dpt:bootps

Chain FORWARD (policy ACCEPT)

target prot opt source destination

ACCEPT all -- anywhere 192.168.122.0/24 state RELATED,ESTABLISHED

ACCEPT all -- 192.168.122.0/24 anywhere

ACCEPT all -- anywhere anywhere

REJECT all -- anywhere anywhere reject-with icmp-port-unreachable

REJECT all -- anywhere anywhere reject-with icmp-port-unreachable

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

[root@xenhost ~]#

[root@xenhost ~]# iptables -L -t nat

Chain PREROUTING (policy ACCEPT)

target prot opt source destination

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

MASQUERADE all -- 192.168.122.0/24 !192.168.122.0/24

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

[root@xenhost ~]#

Save these rules, so we can study them in a different format, as well as restore them when there is a need to:

[root@xenhost ~]# iptables-save > /root/xenhost-iptables-default.txt

Have a look at this file to understand the rules better:

[root@xenhost ~]# cat /root/xenhost-iptables-default.txt

*nat

:PREROUTING ACCEPT [5:180]

:POSTROUTING ACCEPT [6:428]

:OUTPUT ACCEPT [6:428]

-A POSTROUTING -s 192.168.122.0/255.255.255.0 -d ! 192.168.122.0/255.255.255.0 -j MASQUERADE

COMMIT

*filter

:INPUT ACCEPT [168:13693]

:FORWARD ACCEPT [0:0]

:OUTPUT ACCEPT [114:13252]

-A INPUT -i virbr0 -p udp -m udp --dport 53 -j ACCEPT

-A INPUT -i virbr0 -p tcp -m tcp --dport 53 -j ACCEPT

-A INPUT -i virbr0 -p udp -m udp --dport 67 -j ACCEPT

-A INPUT -i virbr0 -p tcp -m tcp --dport 67 -j ACCEPT

-A FORWARD -d 192.168.122.0/255.255.255.0 -o virbr0 -m state --state RELATED,ESTABLISHED -j ACCEPT

-A FORWARD -s 192.168.122.0/255.255.255.0 -i virbr0 -j ACCEPT

-A FORWARD -i virbr0 -o virbr0 -j ACCEPT

-A FORWARD -o virbr0 -j REJECT --reject-with icmp-port-unreachable

-A FORWARD -i virbr0 -j REJECT --reject-with icmp-port-unreachable

COMMIT

[root@xenhost ~]#

The default iptables rules on a KVM physical host

Here is a default iptables rule-set from a KVM based CentOS 5.5 physical host. The default firewall (iptables service) was stopped when libvirtd service was started, at system boot. Also note that no VM is running on the KVM host at the moment.

[root@kvmhost ~]# iptables -L

Chain INPUT (policy ACCEPT)

target prot opt source destination

ACCEPT udp -- anywhere anywhere udp dpt:domain

ACCEPT tcp -- anywhere anywhere tcp dpt:domain

ACCEPT udp -- anywhere anywhere udp dpt:bootps

ACCEPT tcp -- anywhere anywhere tcp dpt:bootps

Chain FORWARD (policy ACCEPT)

target prot opt source destination

ACCEPT all -- anywhere 192.168.122.0/24 state RELATED,ESTABLISHED

ACCEPT all -- 192.168.122.0/24 anywhere

ACCEPT all -- anywhere anywhere

REJECT all -- anywhere anywhere reject-with icmp-port-unreachable

REJECT all -- anywhere anywhere reject-with icmp-port-unreachable

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

[root@kvmhost ~]#

[root@kvmhost ~]# iptables -L -t nat

Chain PREROUTING (policy ACCEPT)

target prot opt source destination

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

MASQUERADE all -- 192.168.122.0/24 !192.168.122.0/24

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

[root@kvmhost ~]#

Let's save these rules in a file, so we can study them in a different format, as well as, load the defaults any time we need to.

[root@kvmhost ~]# iptables-save > /root/kvmhost-iptables.default.txt

Let's look at this file for easier understanding of these rules:

[root@kvmhost ~]# cat /root/kvmhost-iptables.default.txt

*nat

:PREROUTING ACCEPT [661:21364]

:POSTROUTING ACCEPT [58069:3670258]

:OUTPUT ACCEPT [58069:3670258]

-A POSTROUTING -s 192.168.122.0/24 ! -d 192.168.122.0/24 -j MASQUERADE

COMMIT

*filter

:INPUT ACCEPT [1212620:674141323]

:FORWARD ACCEPT [0:0]

:OUTPUT ACCEPT [1518464:780474182]

-A INPUT -i virbr0 -p udp -m udp --dport 53 -j ACCEPT

-A INPUT -i virbr0 -p tcp -m tcp --dport 53 -j ACCEPT

-A INPUT -i virbr0 -p udp -m udp --dport 67 -j ACCEPT

-A INPUT -i virbr0 -p tcp -m tcp --dport 67 -j ACCEPT

-A FORWARD -d 192.168.122.0/24 -o virbr0 -m state --state RELATED,ESTABLISHED -j ACCEPT

-A FORWARD -s 192.168.122.0/24 -i virbr0 -j ACCEPT

-A FORWARD -i virbr0 -o virbr0 -j ACCEPT

-A FORWARD -o virbr0 -j REJECT --reject-with icmp-port-unreachable

-A FORWARD -i virbr0 -j REJECT --reject-with icmp-port-unreachable

COMMIT

[root@kvmhost ~]#

Important note about the default iptables service

It is important to note that in the two examples above, the iptables service was disabled only to avoid possible confusion about whether the rules shown were coming from the iptables service. Otherwise, we do not recommend that you disable your iptables service. If the default iptables rules set up by the iptables service are not suitable for your particular case, then you can adjust them as per your needs. The bottom line is that you must have some level of protection against unwanted traffic.

Another point to be noted here is that, ideally, you should not be running any publicly accessible service (a.k.a. public serving service) other than ssh on a physical host in your production environments. It means that you should not use your physical host to serve out web pages/websites, or run mail services, or FTP, etc. You do not want a cracker to exploit a vulnerability in any of these extra services on your physical host, gain root access, and in turn gain access to "all" virtual machines hosted on this physical host. Even SSH should be used with key based authentication only, and further restricted to be accessible only from the IP addresses of the locations you manage your physical hosts from. If possible, you should also consider running your physical host and the VMs with SELinux enabled.

Understanding the rules file created by iptables-save command

Many people consider the output of iptables-save to be very cryptic. Actually, it is not that cryptic at all! Here is a brief explanation of the iptables rules file, created by iptables-save command. We will use the default iptables rules created by the libvirtd service, saved in a file created at our XEN host.

Note 1: A small virtual machine was created inside XEN host and was started before executing the commands shown below.

Note 2: Notice that virbr0 (activated through libvirtd) is identical on both KVM and XEN hosts. And since we are here to discuss libvirtd related problems, it is ok to use an example from either the KVM host or the XEN host.

[root@xenhost ~]# iptables-save > /root/iptables-vm-running.txt

[root@xenhost ~]# cat /root/iptables-vm-running.txt

*nat

:PREROUTING ACCEPT [34:2932]

:POSTROUTING ACCEPT [18:1292]

:OUTPUT ACCEPT [18:1292]

-A POSTROUTING -s 192.168.122.0/255.255.255.0 -d ! 192.168.122.0/255.255.255.0 -j MASQUERADE

COMMIT

*filter

:INPUT ACCEPT [549:40513]

:FORWARD ACCEPT [0:0]

:OUTPUT ACCEPT [364:41916]

-A INPUT -i virbr0 -p udp -m udp --dport 53 -j ACCEPT

-A INPUT -i virbr0 -p tcp -m tcp --dport 53 -j ACCEPT

-A INPUT -i virbr0 -p udp -m udp --dport 67 -j ACCEPT

-A INPUT -i virbr0 -p tcp -m tcp --dport 67 -j ACCEPT

-A FORWARD -d 192.168.122.0/255.255.255.0 -o virbr0 -m state --state RELATED,ESTABLISHED -j ACCEPT

-A FORWARD -s 192.168.122.0/255.255.255.0 -i virbr0 -j ACCEPT

-A FORWARD -i virbr0 -o virbr0 -j ACCEPT

-A FORWARD -o virbr0 -j REJECT --reject-with icmp-port-unreachable

-A FORWARD -i virbr0 -j REJECT --reject-with icmp-port-unreachable

-A FORWARD -m physdev --physdev-in vif1.0 -j ACCEPT

COMMIT

- Lines starting with * (asterisk) are the iptables "tables". Such as "nat" table, and "filter" table, as shown in the output above. Each table has "chains" defined in them.

- Lines starting with : (colon) are the names of chains. These lines contain the following pieces of information about a chain, in the form ":ChainName POLICY [Packets:Bytes]"

- The name of the chain right next to the starting colon. e.g. "INPUT" , or "PREROUTING"

- The default policy of the chain. e.g. ACCEPT or DROP or REJECT. 99% of time, you will see ACCEPT here.

- The two values inside the square brackets are the number of packets passed through this chain so far, and the number of bytes. e.g. [364:41916] means 364 packets, totalling 41916 bytes, have passed through this chain till this point in time.

- The lines starting with a - (hyphen/minus sign) are the actual rules, which you put in here. "-A" means "Append" the rule to the end of the chain. "-I" (eye) means "Insert" the rule, by default at the top of the chain.

As you notice, these rules are no different than the standard rules you type on the command line, or in any shell script. The limitation of this style of writing rules (as shown here) is, that you cannot use loops and conditions, as you would normally do in a shell script.
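The format described above is regular enough to be read by a small script. The sketch below is only an illustration (the function name summarize_rules is our own). It reads iptables-save output on standard input and prints one line per chain, with its table, policy and counters, followed by the number of rules found in each table.

```shell
#!/bin/bash
# Summarize iptables-save output read from standard input.
summarize_rules() {
    awk '
        # "*nat", "*filter": the start of a table.
        /^\*/ { table = substr($0, 2); rules = 0; next }

        # ":CHAIN POLICY [packets:bytes]": a chain with its policy and counters.
        /^:/ {
            chain = substr($1, 2)
            counters = $3
            gsub(/\[|\]/, "", counters)
            split(counters, c, ":")
            printf "table=%s chain=%s policy=%s packets=%s bytes=%s\n",
                   table, chain, $2, c[1], c[2]
            next
        }

        # "-A ..." or "-I ...": an actual rule.
        /^-[AI] / { rules++; next }

        # "COMMIT": the end of the rules of the current table.
        /^COMMIT/ { printf "table=%s rules=%d\n", table, rules }
    '
}

# Intended use, with the file saved earlier:
#   summarize_rules < /root/iptables-vm-running.txt
```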

More details on these rules

For this explanation, the following ASCII version of Figure 2, should be helpful.

+--[VM1]

|

[LAN 192.168.1.0/24]--(eth0:192.168.1.201)[PhysicalHost:DNS+DHCP+NAT](virbr0:192.168.122.1)+--[VM2]

| |

[Laptop 192.168.1.5] +--[VM3]

Continuing with the same example, we move to explain the iptables rules, created by the libvirtd daemon. I have put line numbers myself, in the beginning of each line, in the output below. (You can use cat -n file, or cat -b file, to include line numbers).

[root@xenhost ~]# cat /root/iptables-vm-running.txt

01) *nat

02) :PREROUTING ACCEPT [34:2932]

03) :POSTROUTING ACCEPT [18:1292]

04) :OUTPUT ACCEPT [18:1292]

05) -A POSTROUTING -s 192.168.122.0/255.255.255.0 -d ! 192.168.122.0/255.255.255.0 -j MASQUERADE

06) COMMIT

07) *filter

08) :INPUT ACCEPT [549:40513]

09) :FORWARD ACCEPT [0:0]

10) :OUTPUT ACCEPT [364:41916]

11) -A INPUT -i virbr0 -p udp -m udp --dport 53 -j ACCEPT

12) -A INPUT -i virbr0 -p tcp -m tcp --dport 53 -j ACCEPT

13) -A INPUT -i virbr0 -p udp -m udp --dport 67 -j ACCEPT

14) -A INPUT -i virbr0 -p tcp -m tcp --dport 67 -j ACCEPT

15) -A FORWARD -d 192.168.122.0/255.255.255.0 -o virbr0 -m state --state RELATED,ESTABLISHED -j ACCEPT

16) -A FORWARD -s 192.168.122.0/255.255.255.0 -i virbr0 -j ACCEPT

17) -A FORWARD -i virbr0 -o virbr0 -j ACCEPT

18) -A FORWARD -o virbr0 -j REJECT --reject-with icmp-port-unreachable

19) -A FORWARD -i virbr0 -j REJECT --reject-with icmp-port-unreachable

20) -A FORWARD -m physdev --physdev-in vif1.0 -j ACCEPT

21) COMMIT

What's happening here is the following:

- Line 05 defines a rule in the POSTROUTING chain in the "nat" table. It says that any traffic originating from 192.168.122.0/255.255.255.0 network, and trying to reach any other network but itself (-d ! 192.168.122.0/255.255.255.0) should be MASQUERADEd. In simple words, if there is any traffic coming from a VM (because only a VM can be on this 192.168.122.0/24 network), trying to go out from the physical LAN interface (eth0) of the physical host , must be masqueraded. This makes sense, as we don't know what will be the IP of the physical interface of the physical host. This rule facilitates the traffic to go out.

- Note: This is a single MASQUERADE rule in the default (non-updated) CENTOS 5.5 installation. If you update your installation using "yum update", you will see libvirtd adding three MASQUERADE rules here, instead of one. (This iptables listing shown here, was obtained from our XEN host, prior to updating it). The new three rules provide essentially the same functionality, and are listed below for reference:

-A POSTROUTING -s 192.168.122.0/255.255.255.0 -d ! 192.168.122.0/255.255.255.0 -p tcp -j MASQUERADE --to-ports 1024-65535

-A POSTROUTING -s 192.168.122.0/255.255.255.0 -d ! 192.168.122.0/255.255.255.0 -p udp -j MASQUERADE --to-ports 1024-65535

-A POSTROUTING -s 192.168.122.0/255.255.255.0 -d ! 192.168.122.0/255.255.255.0 -j MASQUERADE

- Briefly, the first two POSTROUTING rules shown above, MASQUERADE the outgoing TCP and UDP traffic to the IP of the public interface, making sure that the ports are rewritten only using the port-range between 1024 and 65535. The third rule takes care of other outgoing traffic, such as ICMP.

- Lines 06 and 21: The word COMMIT indicates the end of the list of rules for a particular iptables "table".

- Lines 11 to 14: Virtual machines need a mechanism to get an IP address automatically, and to resolve names. DNSMASQ is the small service running on the physical host, serving both the DNS and DHCP requests coming in from the VMs. The DNSMASQ service on this physical host is not reachable over the physical LAN by any other physical host. Neither does it interfere with any DHCP service running elsewhere on the physical LAN. Lines 11 to 14 allow this incoming traffic from the VMs to arrive on the virbr0 interface of the physical host.

- Line 15: In case some traffic originated from a VM and went out on the internet (because of line 05), the replies will now want to reach back to the VM. For example, on the VM, you tried to pull an httpd.rpm file from a CENTOS mirror. So an HTTP request originated from this VM, reached the CENTOS mirror (on the internet), and now the packets related to this transaction need to be allowed back in, so they can reach the VM. This requires a rule in the FORWARD chain, shown in line 15, which says that any traffic going to any VM on the 192.168.122.0/255.255.255.0 network, exiting through/towards the virbr0 interface of the physical host, which is RELATED to, or part of an ESTABLISHED connection with, some previous traffic "originated" from that particular VM, must be allowed. Without this, the return traffic/packets will never reach back to the VM, and you will get all sorts of weird time-outs.

- Line 16: If this rule did not exist, then the rule defined in line 05 would never get any traffic to MASQUERADE. This line (16) says that any traffic originating from VMs on 192.168.122.0/255.255.255.0, arriving at the virbr0 interface of the physical host, and trying to go across the FORWARD chain (to go elsewhere), must be ACCEPTed. Basically, this is the line/rule which transports a packet from virbr0 to eth0 (in this single direction only).

- Line 17: This is simply a multi-direction facilitator for all VMs connected to one virtual network. For example, VM1 and VM3 want to exchange some data on TCP/UDP/ICMP, their traffic would naturally traverse the virtual switch, virbr0. This line/rule allows that traffic to be ACCEPTed.

So far, we are able to send the traffic out from the VM and receive it back as well. Now what?

- Line 18: This line reads as: any traffic coming from any source address, and going out through the virbr0 interface, will be rejected with an ICMP "port unreachable" message. Since the rules before #18 have already dealt with the return traffic to the VMs, and with the traffic between VMs on the same bridge, this rule is for the traffic coming from the "outside network" / "physical LAN". In other words, any traffic which could not satisfy the rules so far, and is still trying to reach the VMs through the virbr0 interface, is REJECTed. For example, you start a simple web service on port 80 on VM1. If you forward a port from the physical interface of your physical host to this port 80 on the VM, using DNAT, and try to reach that port, your access will be REJECTed because of this rule!

- Line 19: Any traffic coming in from any VM, through virbr0 interface and trying to go out from the physical interface of the physical host (eth0) will be REJECTed. For example, in line /rule 16 we saw that any traffic coming in from a VM on 192.168.122.0/24 network, and trying to go out the physical interface of the physical machine is allowed. True, but please note, that is only allowed, when the source IP is from the 192.168.122.0/24 network! If there is a VM on the same virtual switch/bridge (virbr0), but with different IP, (say 10.1.1.1) , and it tries to go through the physical host towards the other side, it would be denied access. This is the last iptables rule as defined by libvirt. The next rule is from XEN.

Note: Rules 18 and 19, shown here, act as a default reject-all or drop-all policy for the FORWARD chain.
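Because the first matching rule wins, a forwarded port only works if an ACCEPT rule for the VM sits above rule 18 in the FORWARD chain. This can be checked offline against a saved rule-set. The sketch below is only an illustration: the function name forward_allowed_before_reject and the VM address 192.168.122.10 are our assumptions, and the address must be passed exactly as it appears in the saved rules.

```shell
#!/bin/bash
# Read iptables-save output on standard input. Succeed (exit status 0) only
# if an ACCEPT rule towards virbr0 for destination $1 appears in the FORWARD
# chain before the first REJECT rule on "-o virbr0".
forward_allowed_before_reject() {
    awk -v vm="$1" '
        BEGIN { status = 1 }
        /^-A FORWARD/ && / -o virbr0 / {
            # The catch-all REJECT was reached first: stop, not allowed.
            if ($0 ~ /-j REJECT/) { exit }
            # An ACCEPT rule for this VM was found first: allowed.
            if ($0 ~ /-j ACCEPT/ && index($0, " -d " vm " ") > 0) { status = 0; exit }
        }
        END { exit status }
    '
}

# FORWARD rules 15 to 19 from the listing above.
libvirt_defaults='-A FORWARD -d 192.168.122.0/255.255.255.0 -o virbr0 -m state --state RELATED,ESTABLISHED -j ACCEPT
-A FORWARD -s 192.168.122.0/255.255.255.0 -i virbr0 -j ACCEPT
-A FORWARD -i virbr0 -o virbr0 -j ACCEPT
-A FORWARD -o virbr0 -j REJECT --reject-with icmp-port-unreachable
-A FORWARD -i virbr0 -j REJECT --reject-with icmp-port-unreachable'

if printf '%s\n' "$libvirt_defaults" | forward_allowed_before_reject 192.168.122.10; then
    echo "new connections to the VM are accepted"
else
    echo "new connections to the VM hit the REJECT rule"
fi
```

With only the libvirt defaults loaded, the check fails, which matches the behaviour described for line 18.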

- Line 20: This is interesting. First, notice that this rule is not part of the default libvirt ruleset. Instead, when a VM on XEN physical host was powered on, this rule got added to the rule-set. (Doesn't appear on KVM host). When the virtual machine is shutdown, this line gets removed. The code controlling this behavior resides in /etc/xen/scripts/vif-common.sh file.

- "PHYSDEV" is a special match module, made available in 2.6 kernels. It is used to match the bridge's physical in and out ports. Its basic usage is simple. e.g. " iptables -m physdev --physdev-in <bridge-port> -j <TARGET>" . It is used in situations where an interface may, or may not, (or may never), have an IP address. Check the iptables man page for more detail on "physdev".

- In XEN, for each virtual machine, there is a vifX.Y interface. A so-called virtual patch cable runs from the virtual machine to this port on the bridge. In the (XEN) example we are following, VM1 (aka domain-1, or the domain with id #1) is connected to the virtual bridge on the physical host, on the vif1.0 port. Here is the rule for reference.

20) -A FORWARD -m physdev --physdev-in vif1.0 -j ACCEPT

- Since these iptables rules (and rule #20 in particular) are added at the XEN (physical) host, we will read and understand the physdev-in and physdev-out, taking XEN host as a reference. Thus, physical-device-in (--physdev-in vif1.0) means that traffic coming in (received) from vif1.0 port on the bridge, towards the XEN host.

- The script /etc/xen/scripts/vif-common.sh can add this rule either at the end of the current rule-set of the physical host, or at the top of it. This is done by changing the "-A" to "-I" in this script file. Here is the part of the script for reference:

. . .

. . .

function frob_iptable()

{

if [ "$command" == "online" ]

then

local c="-A"

else

local c="-D"

fi

. . .

. . .

Note: The purpose of this rule (line #20) seems to be: to make sure that whatever your previous firewall rules on the physical host, (assuming they are "sane"), the VM can always send traffic to/through the physical host. However, during our testing, this rule doesn't seem to work, with any traffic coming in from vif1.0, or going out to vif1.0. During some tests, it was made sure that the PHYSDEV rule was at the top of FORWARD chain (using the c="-I"), instead of being added to the end of the rules, to ensure any possible match. However, we could not get any traffic to get registered on this rule. In other words, no traffic matched this rule. And the counters at this rule always remained at zero.

[root@xenhost ~]# iptables -L -v

. . .

Chain FORWARD (policy ACCEPT 0 packets, 0 bytes)

pkts bytes target prot opt in out source destination

0 0 ACCEPT all -- any any anywhere anywhere PHYSDEV match --physdev-in vif1.0

25 30517 ACCEPT all -- any virbr0 anywhere 192.168.122.0/24 state RELATED,ESTABLISHED

26 1487 ACCEPT all -- virbr0 any 192.168.122.0/24 anywhere

0 0 ACCEPT all -- virbr0 virbr0 anywhere anywhere

1 60 REJECT all -- any virbr0 anywhere anywhere reject-with icmp-port-unreachable

0 0 REJECT all -- virbr0 any anywhere anywhere reject-with icmp-port-unreachable

. . .

[root@xenhost ~]#

This leads us to another possibility. XEN may be expecting the vif1.0 interface on the xenbr0. And since our VM (vm1), is connected to virbr0, instead of xenbr0, this rule is not seeing any traffic. [Needs clarification]

The /etc/xen/xend-config.sxp specifies a directive (network-script network-bridge), which means that XEN will set up network-bridge. The other options are network-nat and network-route. network-bridge directive expects a bridge (xenbr0), sharing the network device (peth0). Thus, XEN's PHYSDEV match rule, in the situation, where libvirt is providing the network-nat service (not XEN), may be totally useless/un-necessary.

Therefore, we will not bother if XEN adds the PHYSDEV match at the beginning of rules, or at the end. We will leave it to default behaviour, i.e. added at the end of rules-set.

We will see in other tests, by creating a separate VM and connecting it to xenbr0, and then check, if this particular rule gets any traffic or not.
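The counter check described above can be repeated without reading the whole listing by eye. The sketch below is only an illustration: physdev_counters is a helper name we made up, and it assumes the column layout of the verbose listing shown earlier (packet count in the first column). It reads a listing on standard input and prints the packet counter of every rule that matches on --physdev-in.

```shell
#!/bin/sh
# physdev_counters: hypothetical helper, not part of XEN or libvirt.
# Reads "iptables -L FORWARD -v" style output on stdin and prints
# "INTERFACE PACKETS" for every rule that uses --physdev-in.
physdev_counters() {
    awk '{
        for (i = 1; i < NF; i++)
            if ($i == "--physdev-in")
                print $(i + 1), $1
    }'
}

# On a live host (as root) one would run:
#   iptables -L FORWARD -v | physdev_counters
```

A count that stays at 0 while the VM is generating traffic corresponds to what we observed for vif1.0.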

Booting the XEN host with both iptables and libvirt services enabled

So far, we have covered all the basics of how the various iptables rules work on a XEN or KVM host. Now is the time to boot the XEN host with the following services enabled: iptables, libvirtd and xend.

It would be interesting to note that the default startup sequence of key services is as following:

[root@xenhost ~]# grep "chkconfig:" /etc/init.d/* | egrep "iptables|network|libvirt|xen" | sort -k4

/etc/init.d/iptables:# chkconfig: 2345 08 92

/etc/init.d/network:# chkconfig: 2345 10 90

/etc/init.d/libvirtd:# chkconfig: 345 97 03

/etc/init.d/xend:# chkconfig: 2345 98 01

/etc/init.d/xendomains:# chkconfig: 345 99 00

[root@xenhost ~]#

The text above is deciphered as:

- The iptables service is started at init sequence number 8,

- then, the network service starts at sequence number 10,

- then, some other services are started (not shown),

- then, libvirtd service starts at sequence number 97,

- then, xend service starts at sequence number 98,

- and then, xendomains service starts at sequence number 99.
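The same reading of the chkconfig headers can be automated. This is a minimal sketch, assuming the "path:# chkconfig: LEVELS START STOP" line format shown in the grep output above; start_order is a helper name we invented for illustration. It prints the start priority next to each service name, sorted numerically.

```shell
#!/bin/sh
# start_order: hypothetical helper for illustration only.
# Reads lines such as "/etc/init.d/xend:# chkconfig: 2345 98 01" on stdin
# and prints "START-PRIORITY SERVICE", sorted by start priority.
start_order() {
    awk '{
        n = split($1, parts, "/")    # $1 looks like /etc/init.d/NAME:#
        name = parts[n]
        sub(/:#$/, "", name)         # strip the trailing ":#"
        print $4, name               # $4 is the start priority
    }' | sort -n
}

# On a live host:
#   grep "chkconfig:" /etc/init.d/* | egrep "iptables|network|libvirt|xen" | start_order
```

The point to take away is that iptables (08) loads its rules long before libvirtd (97) adds its own.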

Before rebooting the server, we make sure that our desired services are configured to start at both run levels 3 and 5:

[root@xenhost ~]# chkconfig --list | egrep "iptables|network|libvirt|xen"

iptables 0:off 1:off 2:on 3:on 4:on 5:on 6:off

libvirtd 0:off 1:off 2:off 3:on 4:on 5:on 6:off

network 0:off 1:off 2:on 3:on 4:on 5:on 6:off

xend 0:off 1:off 2:on 3:on 4:on 5:on 6:off

xendomains 0:off 1:off 2:off 3:on 4:on 5:on 6:off

[root@xenhost ~]#

Note: The only VM at the moment is VM1, and it is configured to "not" start at system boot on this XEN host. Thus, we will not see it up, nor will we see the related PHYSDEV rule at the moment.

Before rebooting, recall that we have a simple set of iptables rules configured in the /etc/sysconfig/iptables file, which mostly just logs the traffic (plus one rule rejecting SMTP). When the system is rebooted, we will analyse which rules disappeared, got displaced, etc. For a quick re-cap, here is the simple iptables rules file we have created:

[root@xenhost ~]# cat /etc/sysconfig/iptables

*filter

:INPUT ACCEPT [226:16512]

:FORWARD ACCEPT [0:0]

:OUTPUT ACCEPT [125:15872]

-A INPUT -i eth0 -j LOG

-A INPUT -i eth0 -p tcp -m tcp --dport 25 -j REJECT --reject-with icmp-port-unreachable

-A FORWARD -j LOG

-A OUTPUT -o eth0 -j LOG

COMMIT

*nat

:PREROUTING ACCEPT [2:64]

:POSTROUTING ACCEPT [2:148]

:OUTPUT ACCEPT [2:148]

-A PREROUTING -i eth0 -j LOG

-A POSTROUTING -o eth0 -j LOG

-A OUTPUT -o eth0 -j LOG

COMMIT

[root@xenhost ~]#

And now, reboot:

[root@xenhost ~]# reboot

Post boot analysis of iptables rules on the XEN host, and Conclusion

After the system booted up, we checked the rules, and below is what we see:

[root@xenhost ~]# iptables -L

Chain INPUT (policy ACCEPT)

target prot opt source destination

ACCEPT udp -- anywhere anywhere udp dpt:domain

ACCEPT tcp -- anywhere anywhere tcp dpt:domain

ACCEPT udp -- anywhere anywhere udp dpt:bootps

ACCEPT tcp -- anywhere anywhere tcp dpt:bootps

LOG all -- anywhere anywhere LOG level warning

REJECT tcp -- anywhere anywhere tcp dpt:smtp reject-with icmp-port-unreachable

Chain FORWARD (policy ACCEPT)

target prot opt source destination

ACCEPT all -- anywhere 192.168.122.0/24 state RELATED,ESTABLISHED

ACCEPT all -- 192.168.122.0/24 anywhere

ACCEPT all -- anywhere anywhere

REJECT all -- anywhere anywhere reject-with icmp-port-unreachable

REJECT all -- anywhere anywhere reject-with icmp-port-unreachable

LOG all -- anywhere anywhere LOG level warning

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

LOG all -- anywhere anywhere LOG level warning

[root@xenhost ~]#

[root@xenhost ~]# iptables -L -t nat

Chain PREROUTING (policy ACCEPT)

target prot opt source destination

LOG all -- anywhere anywhere LOG level warning

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

MASQUERADE tcp -- 192.168.122.0/24 !192.168.122.0/24 masq ports: 1024-65535

MASQUERADE udp -- 192.168.122.0/24 !192.168.122.0/24 masq ports: 1024-65535

MASQUERADE all -- 192.168.122.0/24 !192.168.122.0/24

LOG all -- anywhere anywhere LOG level warning

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

LOG all -- anywhere anywhere LOG level warning

[root@xenhost ~]#

As you would notice, the following chains are altered by libvirtd: INPUT, FORWARD and POSTROUTING. Also notice the rules we configured in /etc/sysconfig/iptables are enabled, but are "pushed down" by the iptables rules applied by libvirt. After seeing these rules, we deduce the following:

- The FORWARD chain has two reject-all type rules introduced by libvirtd, and our innocent rules are below them. Note that in a single-server scenario, we have no use for the FORWARD chain anyway. However, if we are using XEN or KVM and have VMs on the private network (virbr0), then these rules are restrictive. The rules added by libvirt restrict the traffic flow on the FORWARD chain, through the physical host, to and from the VMs (on virbr0). Worse, any rules we configured are pushed down in the FORWARD chain, below the reject-all rules inserted by libvirtd. So, even if we configure rules to allow NEW traffic to flow from eth0 to virbr0, and RELATED,ESTABLISHED traffic to flow back out from virbr0 to eth0, our rules will be pushed down and never matched. This is a point of concern for us. We have to find a solution for this.

- The four rules inserted by libvirt at the beginning of the INPUT chain just make sure that DHCP and DNS traffic from the VMs has no trouble reaching the physical host. The INPUT chain does not have REJECT-ALL sort of rules at the bottom of the chain, as we saw in the FORWARD chain. Our iptables rule blocking the SMTP traffic destined for our physical host still exists at the bottom of the INPUT chain. This means we can have whatever rules we want in the INPUT chain for the physical host's protection. It also means we can use the /etc/sysconfig/iptables file to configure the protection rules for the physical host, and rest assured that they will not be affected. Good. No issues here.

- The OUTPUT chain in both nat and filter tables, has no new rules from libvirt. The OUTPUT chain is otherwise of no importance to us. Thus, no issues here.

- The POSTROUTING chain has MASQUERADE rules, which are the facilitators for the VMs on the private virtual network inside our physical host. The MASQUERADE rules make sure that any packets leaving through the outgoing interface have their source IP address replaced with the IP address of that interface. We didn't want to configure any rules of our own in the POSTROUTING chain, so this is also a good thing. We don't have issues here. However, we have a small concern. MASQUERADE is slightly more expensive than SNAT. MASQUERADE looks up the address of the outgoing interface whenever a new connection is NATed, and then rewrites the source address of the outgoing packets with the IP it obtained. Public internet servers normally have a fixed public IP address, so SNAT can be used instead of MASQUERADE. With SNAT, the rule is configured to always rewrite the source address to a fixed IP, so the step of querying the interface is skipped. Thus SNAT should be preferred in such scenarios. We hope to see a configuration directive from libvirt in the future to change MASQUERADE into SNAT, without resorting to any tricks.

- The PREROUTING chain has no rules added by libvirt. This is simply beautiful, because this is where we would configure our traffic redirector (DNAT) rules (in the /etc/sysconfig/iptables file). No issues here.
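The SNAT alternative mentioned in the POSTROUTING point above could look roughly like the following. This is a sketch under assumptions, not something libvirt provides: snat_rule is a hypothetical helper, the rule uses -I so that it would land above libvirt's MASQUERADE rules, and the "! -d" spelling is the one accepted by newer iptables releases (the older releases used in this document write it as "-d !"). The helper only prints the command.

```shell
#!/bin/sh
# snat_rule: hypothetical helper that only PRINTS a command; it changes nothing.
# $1 = private IP of the VM, $2 = fixed public IP to use as the new source.
# 192.168.122.0/24 is the private libvirt network used throughout this text.
snat_rule() {
    printf 'iptables -t nat -I POSTROUTING -s %s ! -d 192.168.122.0/24 -j SNAT --to-source %s\n' "$1" "$2"
}

snat_rule 192.168.122.116 192.168.1.201
```

Such a command would have to run after libvirtd has added its own rules, otherwise it ends up below the MASQUERADE rules and is never reached.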

So by looking at our points above, we can conclude that we can configure certain iptables rules to manage traffic to/from the VMs, as well as protect the physical server itself from malicious traffic. The only aspect we cannot control from /etc/sysconfig/iptables file is the proper flow of NEW and RELATED/ESTABLISHED traffic between eth0 and virbr0. To control this, we need to do something manually.
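To see at a glance which of our own FORWARD rules ended up below libvirt's reject-all rules, a saved rule-set can be scanned as sketched below. This is only an illustration: rules_below_reject is a helper name of ours, and it assumes the "-A FORWARD ..." line format produced by iptables-save.

```shell
#!/bin/sh
# rules_below_reject: hypothetical helper for illustration only.
# Reads iptables-save output on stdin and prints every non-REJECT FORWARD
# rule that appears after the first FORWARD rule with a REJECT target.
rules_below_reject() {
    awk '
        $1 == "-A" && $2 == "FORWARD" {
            is_reject = ($0 ~ /-j REJECT/)
            if (seen_reject && !is_reject) print
            if (is_reject) seen_reject = 1
        }'
}

# On a live host (as root) one would run:
#   iptables-save | rules_below_reject
```

Any rule this prints can never match traffic entering or leaving virbr0, because that traffic has already been rejected further up the chain.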

Solutions: The iptables rule-set for a publicly accessible VM

We have analysed, in depth, the iptables rules set up by libvirt, and we have reached the conclusion that there are not that many issues if we want to access a particular VM (on the private network) of a publicly accessible physical host (XEN/KVM). To do that, we need to configure some iptables rules of our own in the /etc/sysconfig/iptables file. There are two scenarios, however, and we will deal with both.

- The Poor-Man's setup: In this setup, we have only one public IP for our server, and cannot afford to (or don't want to) acquire another public IP for the same server. This may be suitable for situations where you just need to separate a web server and a mail server, as two separate VMs on the same host. Since the port requirements of these two VMs are totally separate, we don't need to acquire additional public IPs for the two VMs. We can simply redirect traffic for ports 80 and 443 to VM1 (web server), and traffic for ports 25, 110 and 143 to VM2 (mail server). However, this is a limited way to manage the publicly accessible services on private VMs. What if we have two, three or more (virtual) web servers to access from the internet, all on the same physical host? Of course, we can forward port 80 traffic to only one VM (web server) at a time. For the other web servers, we would have to run their web services on ports such as 81, 82, etc. Even if we do that, who will instruct the clients to add ":81" to the URL they are typing in their web browsers? Clearly this is an impractical approach, suitable for only one VM per service/port on a physical host. Thus, it is appropriately named the Poor-Man's setup.

- The Rich-Man's setup: In this setup, we have a physical host, and the ability/freedom/money to purchase extra IP addresses as we please. (Note: You normally have to give a valid justification to the service provider when requesting additional IPs.) You may or may not have an additional network card on this host. We will assume that you don't, because that is the case with 99% of web servers provided by server rental companies, such as serverbeach.com. Adding a network card to the server is either not possible (for technical reasons/limitations), or is costly. In such a case, the service provider may ask you to set up the additional IP address on a sub-interface of your existing network card, assuming you are using Linux. (You wouldn't be reading this text if you were using anything other than Linux anyway!) At this point, the reader may have a valid question: why didn't we set up our VMs on this host on xenbr0, instead of virbr0? That would have been a lot easier, and there would never have been a need to discuss the private network in the first place, as all VMs would have public IPs from the same subnet to which the physical server is connected. (Ah! If life was that simple!) This has been discussed in the introduction of this paper. Once again, for the sake of a re-cap, the reason is that server rental companies like ServerBeach.com do not provide this mode of configuring VMs. Their billing system is not capable of handling traffic coming from two different MAC addresses on the same physical server. Thus they want you to set up your VMs on the physical host's private network, set up a sub-interface of eth0, which will be eth0:0, and use the additional IP address they give you on that sub-interface. This makes sure that, even though traffic arrives and leaves on two different IP addresses, the MAC address remains the same, and thus there are no issues for billing.

With the explanation of the two types of setups out of the way, let's configure the "Poor-Man's" setup first.

Scenario 1: The Poor-Man's Setup, and The Solution

Scenario: One XEN/KVM host with one VM installed inside it, on private network (virbr0). VM is configured as a web server, running on ports 80 and 443.

- XEN host's eth0 IP : 192.168.1.201

- VM1 IP: 192.168.122.116

- Client / site visitor IP: 192.168.1.5

Objective: The VM should be able to access the internet, for any internal required facility, updates, etc. And, the VM should also be accessible from the outside, using a public IP. The VM's webserver is serving a single/simple web page, index.html, having the text/content: "Private VM1 inside a XEN host" in it. This is what we should be able to see in our browser.

Configuration: Most of the iptables rules are already configured by the libvirt service. Those rules will ensure that the VM is capable of accessing the internet. We only need to add two DNAT rules on the PREROUTING chain, for port 80 and 443, so the traffic will go to the VM.

# iptables -t nat -A PREROUTING -p tcp -i eth0 --destination-port 80 -j DNAT --to-destination 192.168.122.116

# iptables -t nat -A PREROUTING -p tcp -i eth0 --destination-port 443 -j DNAT --to-destination 192.168.122.116
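The two DNAT commands above differ only in the port number. As a minimal sketch (the function name dnat_rules is ours, not part of iptables), the same commands can be generated for any list of ports. The helper only prints the commands, so it can be tried as a normal user.

```shell
#!/bin/sh
# dnat_rules: hypothetical helper that only PRINTS commands; it changes nothing.
# $1 = private IP of the VM, remaining arguments = TCP ports to forward to it.
dnat_rules() {
    vm_ip=$1
    shift
    for port in "$@"; do
        printf 'iptables -t nat -A PREROUTING -p tcp -i eth0 --destination-port %s -j DNAT --to-destination %s\n' "$port" "$vm_ip"
    done
}

dnat_rules 192.168.122.116 80 443
```

For a mail server VM, the same helper would be called with that VM's private IP and ports 25, 110 and 143.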

Now we try to access the VM from outside, and see the following "connection refused" message:

[kamran@kworkhorse tmp]$ wget http://192.168.1.201 -O index.html; cat index.html

--2011-02-08 21:31:16-- http://192.168.1.201/

Connecting to 192.168.1.201:80... failed: Connection refused.

[kamran@kworkhorse tmp]$

This happens because libvirt's default reject-all rules block NEW traffic from eth0 to virbr0: the REJECT target answers with icmp-port-unreachable, which wget reports as "Connection refused". Libvirt's rules only allow traffic that the VMs themselves start, and the replies to it.

To prevent this, we "insert" the following two rules at the beginning of the FORWARD chain. The first rule (below) allows any NEW traffic arriving from the internet on the eth0 interface to be forwarded to the VMs on the virbr0 interface. The second rule allows the RELATED,ESTABLISHED replies to flow back from virbr0 to eth0. Since all the common services run on TCP, we have restricted the traffic type to tcp. This protects the VMs from unsolicited UDP traffic from outside. (Unwanted UDP traffic never matches our two rules, so it falls through to libvirt's REJECT rules.)

# iptables -I FORWARD -i eth0 -o virbr0 -p tcp -m state --state NEW -j ACCEPT

# iptables -I FORWARD -i virbr0 -o eth0 -p tcp -m state --state RELATED,ESTABLISHED -j ACCEPT
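Note the effect of -I (insert) as opposed to -A (append): without a rule number, each -I places the new rule at position 1, so the rule inserted last ends up on top. The toy model below only illustrates that ordering with plain strings; it does not touch iptables, and the function names are made up for the example.

```shell
#!/bin/sh
# Toy model of a chain, for illustration only: one rule per line in $chain.
chain='libvirt ACCEPT/REJECT rules'

insert_rule() {
    # like "iptables -I CHAIN": the new rule becomes rule 1
    chain=$(printf '%s\n%s' "$1" "$chain")
}

append_rule() {
    # like "iptables -A CHAIN": the new rule goes last
    chain=$(printf '%s\n%s' "$chain" "$1")
}

insert_rule 'eth0 to virbr0, tcp, NEW, ACCEPT'
insert_rule 'virbr0 to eth0, tcp, RELATED,ESTABLISHED, ACCEPT'
append_rule 'LOG'

printf '%s\n' "$chain"
```

Both inserted rules sit above the libvirt rules, while the appended rule stays below them, which is exactly why our rules from /etc/sysconfig/iptables were of no use in the FORWARD chain.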

After inserting the two rules shown above, we try to access the VM from outside, and see the following successful output:

[kamran@kworkhorse tmp]$ wget http://192.168.1.201 -O index.html; cat index.html

--2011-02-08 21:45:54-- http://192.168.1.201/

Connecting to 192.168.1.201:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: 37 [text/html]

Saving to: “index.html”

100%[====================================================================================>] 37 --.-K/s in 0s

2011-02-08 21:45:54 (1.53 MB/s) - “index.html” saved [37/37]

Private VM1 inside a XEN host

[kamran@kworkhorse tmp]$

Success! As you can see, index.html is displayed on the screen and its contents read: "Private VM1 inside a XEN host". We are able to successfully access a VM inside the XEN/KVM physical host. Now we need to automate the solution.

By executing a simple "iptables-save" command, we can capture all the rules in a file. And from there, we can edit the file as per our needs.

[root@xenhost ~]# iptables-save > /etc/sysconfig/iptables

Next, we edit this file and remove all rules which belong to libvirtd or xend. It is important to remove these; otherwise, when the libvirt service starts, it will add another set of the same rules, doubling the size of the rule-set, which is useless anyway.

First, here is what the /etc/sysconfig/iptables file looks like, when we used the iptables-save command above.

[root@xenhost ~]# cat /etc/sysconfig/iptables

*nat

:PREROUTING ACCEPT [612:28358]

:POSTROUTING ACCEPT [57:4258]

:OUTPUT ACCEPT [55:4138]

-A PREROUTING -i eth0 -j LOG

-A PREROUTING -i eth0 -p tcp -m tcp --dport 80 -j DNAT --to-destination 192.168.122.116

-A PREROUTING -i eth0 -p tcp -m tcp --dport 443 -j DNAT --to-destination 192.168.122.116

-A POSTROUTING -s 192.168.122.0/255.255.255.0 -d ! 192.168.122.0/255.255.255.0 -p tcp -j MASQUERADE --to-ports 1024-65535

-A POSTROUTING -s 192.168.122.0/255.255.255.0 -d ! 192.168.122.0/255.255.255.0 -p udp -j MASQUERADE --to-ports 1024-65535

-A POSTROUTING -s 192.168.122.0/255.255.255.0 -d ! 192.168.122.0/255.255.255.0 -j MASQUERADE

-A POSTROUTING -o eth0 -j LOG

-A OUTPUT -o eth0 -j LOG

COMMIT

*filter

:INPUT ACCEPT [3329:269273]

:FORWARD ACCEPT [0:0]

:OUTPUT ACCEPT [1909:224109]

-A INPUT -i virbr0 -p udp -m udp --dport 53 -j ACCEPT

-A INPUT -i virbr0 -p tcp -m tcp --dport 53 -j ACCEPT

-A INPUT -i virbr0 -p udp -m udp --dport 67 -j ACCEPT

-A INPUT -i virbr0 -p tcp -m tcp --dport 67 -j ACCEPT

-A INPUT -i eth0 -j LOG

-A INPUT -i eth0 -p tcp -m tcp --dport 25 -j REJECT --reject-with icmp-port-unreachable

-A FORWARD -i eth0 -o virbr0 -p tcp -m state --state NEW -j ACCEPT

-A FORWARD -i virbr0 -o eth0 -p tcp -m state --state RELATED,ESTABLISHED -j ACCEPT

-A FORWARD -d 192.168.122.0/255.255.255.0 -o virbr0 -m state --state RELATED,ESTABLISHED -j ACCEPT

-A FORWARD -s 192.168.122.0/255.255.255.0 -i virbr0 -j ACCEPT

-A FORWARD -i virbr0 -o virbr0 -j ACCEPT

-A FORWARD -o virbr0 -j REJECT --reject-with icmp-port-unreachable

-A FORWARD -i virbr0 -j REJECT --reject-with icmp-port-unreachable

-A FORWARD -j LOG

-A OUTPUT -o eth0 -j LOG

COMMIT

[root@xenhost ~]#

Below is our desired version. Notice that we have also removed the NEW and RELATED/ESTABLISHED rules we added to the FORWARD chain, because they would get pushed down anyway, below the reject-all rules, so they are of no use here. We have also removed the LOG rules. We added the LOG rules for the sake of example only. Since their purpose is served, we have removed them.

[root@xenhost ~]# cat /etc/sysconfig/iptables

*nat

:PREROUTING ACCEPT [612:28358]

:POSTROUTING ACCEPT [57:4258]

:OUTPUT ACCEPT [55:4138]

-A PREROUTING -i eth0 -p tcp -m tcp --dport 80 -j DNAT --to-destination 192.168.122.116

-A PREROUTING -i eth0 -p tcp -m tcp --dport 443 -j DNAT --to-destination 192.168.122.116

# The POSTROUTING line below will add a faster SNAT rule for your VM, assuming your VM is 192.168.122.116,

# and your public IP address is 192.168.1.201. libvirtd will add additional MASQUERADE rules,

# and the rule below will be pushed down, rendering it useless.

# You may want to add it to rc.local, or a script of your choice, which should run *after* xend is started.

# -A POSTROUTING -s 192.168.122.116 -d ! 192.168.122.0/255.255.255.0 -j SNAT --to-source 192.168.1.201

COMMIT

*filter

:INPUT ACCEPT [3329:269273]

:FORWARD ACCEPT [0:0]

:OUTPUT ACCEPT [1909:224109]

COMMIT

[root@xenhost ~]#
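The manual clean-up described above can be approximated with a simple filter. This is a rough sketch, assuming that every rule added by libvirtd mentions either virbr0 or the 192.168.122.0 network, and that every rule added by xend uses the physdev match; strip_libvirt_rules is a hypothetical name. It also drops our own FORWARD rules that mention virbr0, which is what we want here, but it does not remove the LOG rules, so the result should still be reviewed by hand.

```shell
#!/bin/sh
# strip_libvirt_rules: hypothetical helper for illustration only.
# Reads iptables-save output on stdin and drops every line that mentions
# virbr0, the 192.168.122.0 network (not single hosts in it), or physdev.
strip_libvirt_rules() {
    grep -v -e 'virbr0' -e '192\.168\.122\.0/' -e 'physdev'
}

# On a live host (as root) one could review the result with:
#   iptables-save | strip_libvirt_rules
```

The DNAT rules survive the filter because they name the single host 192.168.122.116, not the network.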

As you can see, in essence we have just kept our traffic redirector rules in the PREROUTING chain, and the rest of the file has been emptied. When the system boots next time, the iptables service will load these two rules. Then, when the libvirt service runs, it will add its default set of rules. The only two rules left now are the ones which move the traffic to/from the VMs across the FORWARD chain. We wish we could control that from the same /etc/sysconfig/iptables file. The only solution is to either add those rules to the /etc/rc.local file, or create a small simple rc script (a service file), and configure it to start right after the libvirtd service.

The readers might be thinking that we could add all our custom rules to rc.local, or to the proposed new service file. We recommend against that. The /etc/sysconfig/iptables file is still an excellent place to add more rules easily, in case there is a need to, especially for the INPUT chain. It is easier to add server protection rules to this file than to manage them in different places. Besides, our NEW and RELATED/ESTABLISHED rules are totally generic in nature, and you will probably never need to change them throughout the service lifetime of your physical host. So they can be kept in either rc.local, or a small service script. We will show both methods below.

Method #1: /etc/rc.local

[root@xenhost ~]# cat /etc/rc.local

#!/bin/sh

# This script will be executed *after* all the other init scripts.

# You can put your own initialization stuff in here if you don't

# want to do the full Sys V style init stuff.

touch /var/lock/subsys/local

iptables -I FORWARD -i eth0 -o virbr0 -p tcp -m state --state NEW -j ACCEPT

iptables -I FORWARD -i virbr0 -o eth0 -p tcp -m state --state RELATED,ESTABLISHED -j ACCEPT

[root@xenhost ~]#

Method #2: A small service file

[root@xenhost init.d]# cat /etc/init.d/post-libvirtd-iptables

#!/bin/sh

#

# post-libvirtd. Sets up additional iptables rules, after libvirt is done adding it's rules.

#

# chkconfig: 2345 99 02

# description: Inserts two iptables rules to the FORWARD chain on a XEN/KVM Hypervisor.

# Source function library.

. /etc/init.d/functions

start() {

iptables -I FORWARD -i eth0 -o virbr0 -p tcp -m state --state NEW -j ACCEPT

iptables -I FORWARD -i virbr0 -o eth0 -p tcp -m state --state RELATED,ESTABLISHED -j ACCEPT

logger "Two iptables rules added to the FORWARD chain"

}

stop() {

echo "post-libvirtd-iptables - Do nothing."

}

case "$1" in

start)

start

RETVAL=$?

;;

stop)

stop

RETVAL=$?

;;

esac

exit $RETVAL

[root@xenhost init.d]#

Now, make the script executable and add the service to init.

[root@xenhost init.d]# chmod +x /etc/init.d/post-libvirtd-iptables

[root@xenhost init.d]# chkconfig --add post-libvirtd-iptables

[root@xenhost init.d]# chkconfig --level 35 post-libvirtd-iptables on

Time to restart our XEN/KVM host, and see if it boots up with our expected result.
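One weakness of the start() function above is that running "service post-libvirtd-iptables start" a second time inserts the same two rules again. A possible refinement is sketched below. It assumes an iptables release that supports the -C (check) option, which the older release used on our test host may not have, and ensure_forward_rule is a hypothetical helper name. The command is taken from the IPT variable only so that the logic can be tried out with a stand-in command.

```shell
#!/bin/sh
# IPT is the command used to manage the rules; it defaults to iptables.
IPT=${IPT:-iptables}

# ensure_forward_rule: hypothetical helper. Inserts a rule at the top of
# the FORWARD chain only if an identical rule is not already present.
# Assumes an iptables version that supports -C (--check).
ensure_forward_rule() {
    if ! "$IPT" -C FORWARD "$@" 2>/dev/null; then
        "$IPT" -I FORWARD "$@"
    fi
}

# Intended use inside start():
#   ensure_forward_rule -i eth0 -o virbr0 -p tcp -m state --state NEW -j ACCEPT
#   ensure_forward_rule -i virbr0 -o eth0 -p tcp -m state --state RELATED,ESTABLISHED -j ACCEPT
```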

[root@xenhost init.d]# reboot

After the system boots up, start the VM, and check the iptables rule-set:

[root@xenhost ~]# iptables -L

Chain INPUT (policy ACCEPT)

target prot opt source destination

ACCEPT udp -- anywhere anywhere udp dpt:domain

ACCEPT tcp -- anywhere anywhere tcp dpt:domain

ACCEPT udp -- anywhere anywhere udp dpt:bootps

ACCEPT tcp -- anywhere anywhere tcp dpt:bootps

Chain FORWARD (policy ACCEPT)

target prot opt source destination

ACCEPT tcp -- anywhere anywhere state RELATED,ESTABLISHED

ACCEPT tcp -- anywhere anywhere state NEW

ACCEPT all -- anywhere 192.168.122.0/24 state RELATED,ESTABLISHED

ACCEPT all -- 192.168.122.0/24 anywhere

ACCEPT all -- anywhere anywhere

REJECT all -- anywhere anywhere reject-with icmp-port-unreachable

REJECT all -- anywhere anywhere reject-with icmp-port-unreachable

ACCEPT all -- anywhere anywhere PHYSDEV match --physdev-in vif1.0

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

[root@xenhost ~]#

[root@xenhost ~]# iptables -L -t nat

Chain PREROUTING (policy ACCEPT)

target prot opt source destination

DNAT tcp -- anywhere anywhere tcp dpt:http to:192.168.122.116

DNAT tcp -- anywhere anywhere tcp dpt:https to:192.168.122.116

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

MASQUERADE tcp -- 192.168.122.0/24 !192.168.122.0/24 masq ports: 1024-65535

MASQUERADE udp -- 192.168.122.0/24 !192.168.122.0/24 masq ports: 1024-65535

MASQUERADE all -- 192.168.122.0/24 !192.168.122.0/24

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

[root@xenhost ~]#

Congratulations! Our rules are set up exactly as we expected them to be. A virtual machine (VM1) was also started before we collected the above output. Now, for the last time, we check if we are able to access the webpage of our webserver.

[kamran@kworkhorse tmp]$ wget http://192.168.1.201 -O index.html; cat index.html

--2011-02-08 22:54:22-- http://192.168.1.201/

Connecting to 192.168.1.201:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: 37 [text/html]

Saving to: “index.html”

100%[====================================================================================>] 37 --.-K/s in 0s

2011-02-08 22:54:22 (1.06 MB/s) - “index.html” saved [37/37]

Private VM1 inside a XEN host

[kamran@kworkhorse tmp]$

Excellent! So our solution works perfectly!

Scenario 2: The Rich-Man's Setup, and The Solution

In this setup, we have multiple public IPs for a single physical host. The extra IPs are configured on sub-interfaces of eth0 of the physical host. This setup allows more freedom. That is, each public IP can be DNATed to an individual VM with a private IP. Thus, the limitation of ports is also lifted. Now, each IP can have its own port 80, 22, 25, 110, etc. This is exactly what is required by small hosting providers, who have servers provided by server rental companies like ServerBeach.com.

Configuration: In this setup, we have two VMs running inside our XEN host. Both are web servers, serving different content. VM1 has private IP 192.168.122.116 and VM2 has the private IP 192.168.122.71. The physical host has three (so called, public) IPs as follows:

- eth0: 192.168.1.201 (XEN physical host)

- eth0:0 192.168.1.211 (VM1 public IP)

- eth0:1 192.168.1.212 (VM2 public IP)

Note: You can setup more sensible fixed IPs for your VMs. Our practice, and suggestion is to keep the last octet same in the public and private IPs. Such as 192.168.122.211 for VM1 and 192.168.122.212 for VM2.

Setting up a sub-interface is pretty easy.

[root@xenhost ~]# cat /etc/sysconfig/network-scripts/ifcfg-eth0\:0

DEVICE=eth0:0

BOOTPROTO=static

ONBOOT=yes

IPADDR=192.168.1.211

NETMASK=255.255.255.0

[root@xenhost ~]#

[root@xenhost ~]# cat /etc/sysconfig/network-scripts/ifcfg-eth0\:1

DEVICE=eth0:1

BOOTPROTO=static

ONBOOT=yes

IPADDR=192.168.1.212

NETMASK=255.255.255.0

[root@xenhost ~]#
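The two files above follow the same pattern, so further sub-interfaces can be generated rather than typed. The sketch below is only an illustration: alias_ifcfg is a made-up helper name, and it prints the file contents instead of writing them, so the output can be checked before it is redirected into /etc/sysconfig/network-scripts/.

```shell
#!/bin/sh
# alias_ifcfg: hypothetical helper that PRINTS the contents of an
# ifcfg-eth0:N file; it writes nothing to disk.
# $1 = alias index (0, 1, ...), $2 = IP address, $3 = netmask (optional).
alias_ifcfg() {
    printf 'DEVICE=eth0:%s\nBOOTPROTO=static\nONBOOT=yes\nIPADDR=%s\nNETMASK=%s\n' \
        "$1" "$2" "${3:-255.255.255.0}"
}

alias_ifcfg 0 192.168.1.211
```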

Restart the network service:

[root@xenhost network-scripts]# service network restart

Set up the /etc/sysconfig/iptables file, as per our requirements:

[root@xenhost ~]# iptables-save

*filter

:INPUT ACCEPT [2480:206283]

:FORWARD ACCEPT [0:0]

:OUTPUT ACCEPT [1790:216317]

COMMIT

*nat

:PREROUTING ACCEPT [363:47472]

:POSTROUTING ACCEPT [114:9636]

:OUTPUT ACCEPT [103:8976]

-A PREROUTING -d 192.168.1.211 -i eth0 -p tcp -j DNAT --to-destination 192.168.122.116

-A PREROUTING -d 192.168.1.212 -i eth0 -p tcp -j DNAT --to-destination 192.168.122.71

# The two lines below will add faster SNAT rules to POSTROUTING. Since they will be pushed down,

# by the libvirtd rules, you will need to add them in the rc.local or a script of your choice,

# which should run after xend.

# -A POSTROUTING -s 192.168.122.116 -d ! 192.168.122.0/255.255.255.0 -j SNAT --to-source 192.168.1.211

# -A POSTROUTING -s 192.168.122.71 -d ! 192.168.122.0/255.255.255.0 -j SNAT --to-source 192.168.1.212

COMMIT

[root@xenhost ~]#

Note: You will notice the interface name "eth0" in the PREROUTING rules above, whereas you might have been expecting eth0:0 and eth0:1 for the two VMs respectively. The problem is that iptables does not, as yet, support a sub-interface as an incoming or outgoing interface. Thus we have to use the name of the parent device, which is eth0 in our case. However, if you want, you can remove the "-i eth0" part from the PREROUTING rules above entirely, and it will still work.

As you can see above, we are not using port forwarding this time. Instead we are DNATing traffic from a public IP to a private IP, on a one-to-one basis.

You will also notice that, in essence, we have just kept our traffic redirector rules in the PREROUTING chain, and the rest of the rules are deleted from this file. When the system boots next time, the iptables service will load these two rules. Then, when the libvirt service runs, it will add its default set of rules. The only two rules left now are the ones which move the traffic to/from the VMs across the FORWARD chain. We wish we could control that from the same /etc/sysconfig/iptables file. The only solution is to either add those rules to the /etc/rc.local file, or create a small simple rc script (a service file), and configure it to start right after the libvirtd/xend service.

The readers might be thinking that we could add all our custom rules to rc.local, or to the proposed new service file. We recommend against that. The /etc/sysconfig/iptables file is still an excellent place to add more rules easily, in case there is a need to. It is easier to add server protection rules to it than to manage them in different places. Besides, our NEW and RELATED/ESTABLISHED rules are totally generic in nature, and you will probably never need to change them throughout the service lifetime of your physical host. The same is the case with the optional SNAT rules. So they can be kept in either rc.local, or a small service script. We will show both methods below.
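The one-to-one mapping can be written down as a list of public/private pairs, from which the PREROUTING lines follow mechanically. This is a minimal sketch: one_to_one_dnat is a hypothetical helper, the "public=private" argument format is our own convention, and the output uses the same rule format as the rules file above.

```shell
#!/bin/sh
# one_to_one_dnat: hypothetical helper that only PRINTS rule lines in the
# format used by /etc/sysconfig/iptables. Each argument is a pair written
# as "public_ip=private_ip".
one_to_one_dnat() {
    for pair in "$@"; do
        public=${pair%%=*}
        private=${pair#*=}
        printf '%s\n' "-A PREROUTING -d $public -i eth0 -p tcp -j DNAT --to-destination $private"
    done
}

one_to_one_dnat 192.168.1.211=192.168.122.116 192.168.1.212=192.168.122.71
```

Adding a third VM then means adding one more pair, provided the matching sub-interface exists on the host.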

Method #1: /etc/rc.local

[root@xenhost ~]# cat /etc/rc.local

#!/bin/sh

# This script will be executed *after* all the other init scripts.

# You can put your own initialization stuff in here if you don't

# want to do the full Sys V style init stuff.

touch /var/lock/subsys/local

iptables -I FORWARD -i eth0 -o virbr0 -p tcp -m state --state NEW -j ACCEPT

iptables -I FORWARD -i virbr0 -o eth0 -p tcp -m state --state RELATED,ESTABLISHED -j ACCEPT

[root@xenhost ~]#

Method #2: A small service file

[root@xenhost init.d]# cat /etc/init.d/post-libvirtd-iptables
#!/bin/sh
#
# post-libvirtd. Sets up additional iptables rules, after libvirt is done adding its rules.
#
# chkconfig: 2345 99 02
# description: Inserts two iptables rules to the FORWARD chain on a XEN/KVM Hypervisor.

# Source function library.
. /etc/init.d/functions

RETVAL=0

start() {
    # Inserted at the top of FORWARD, above the rules libvirt has already added.
    iptables -I FORWARD -i eth0 -o virbr0 -p tcp -m state --state NEW -j ACCEPT
    iptables -I FORWARD -i virbr0 -o eth0 -p tcp -m state --state RELATED,ESTABLISHED -j ACCEPT
    logger "Two iptables rules added to the FORWARD chain"
}

stop() {
    echo "post-libvirtd-iptables - Do nothing."
}

case "$1" in
    start)
        start
        RETVAL=$?
        ;;
    stop)
        stop
        RETVAL=$?
        ;;
    *)
        # Any other argument is an error.
        echo "Usage: $0 {start|stop}"
        RETVAL=1
        ;;
esac

exit $RETVAL
[root@xenhost init.d]#

[root@xenhost init.d]# chmod +x /etc/init.d/post-libvirtd-iptables
[root@xenhost init.d]# chkconfig --add post-libvirtd-iptables
[root@xenhost init.d]# chkconfig --level 35 post-libvirtd-iptables on
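The chkconfig header in the service file requests start priority 99, with the intention that the script runs after libvirtd/xend during boot. A small sketch for reading that priority out of any init script, so the ordering can be compared, is shown here. The function name start_priority is hypothetical.

```shell
#!/bin/sh
# Print the start priority declared in an init script's "# chkconfig:" header.
# The header has the form: # chkconfig: <runlevels> <start> <stop>
start_priority() {
    awk '$1 == "#" && $2 == "chkconfig:" { print $4; exit }' "$1"
}
```

Running start_priority /etc/init.d/post-libvirtd-iptables on the script above prints 99. Running it against the libvirtd or xend init script on your host shows their priority; a lower number starts earlier.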

Time to restart our XEN/KVM host, and see whether it boots up with the rule-set we expect.

[root@xenhost init.d]# reboot

After the system comes back up from the reboot, we manually start our VMs and check the rule-set:

[root@xenhost network-scripts]# iptables -L
Chain INPUT (policy ACCEPT)
target     prot opt source               destination
ACCEPT     udp  --  anywhere             anywhere            udp dpt:domain
ACCEPT     tcp  --  anywhere             anywhere            tcp dpt:domain
ACCEPT     udp  --  anywhere             anywhere            udp dpt:bootps
ACCEPT     tcp  --  anywhere             anywhere            tcp dpt:bootps

Chain FORWARD (policy ACCEPT)
target     prot opt source               destination
ACCEPT     tcp  --  anywhere             anywhere            state RELATED,ESTABLISHED
ACCEPT     tcp  --  anywhere             anywhere            state NEW
ACCEPT     all  --  anywhere             192.168.122.0/24    state RELATED,ESTABLISHED
ACCEPT     all  --  192.168.122.0/24     anywhere
ACCEPT     all  --  anywhere             anywhere
REJECT     all  --  anywhere             anywhere            reject-with icmp-port-unreachable
REJECT     all  --  anywhere             anywhere            reject-with icmp-port-unreachable
ACCEPT     all  --  anywhere             anywhere            PHYSDEV match --physdev-in vif1.0
ACCEPT     all  --  anywhere             anywhere            PHYSDEV match --physdev-in vif2.0

Chain OUTPUT (policy ACCEPT)
target     prot opt source               destination
[root@xenhost network-scripts]#
[root@xenhost network-scripts]# iptables -L -t nat
Chain PREROUTING (policy ACCEPT)
target     prot opt source               destination
DNAT       tcp  --  anywhere             192.168.1.211       to:192.168.122.116
DNAT       tcp  --  anywhere             192.168.1.212       to:192.168.122.71

Chain POSTROUTING (policy ACCEPT)
target     prot opt source               destination
MASQUERADE tcp  --  192.168.122.0/24    !192.168.122.0/24    masq ports: 1024-65535
MASQUERADE udp  --  192.168.122.0/24    !192.168.122.0/24    masq ports: 1024-65535
MASQUERADE all  --  192.168.122.0/24    !192.168.122.0/24

Chain OUTPUT (policy ACCEPT)
target     prot opt source               destination
[root@xenhost network-scripts]#

Congratulations. The iptables rules seem to be set up correctly: our two FORWARD rules sit at the top of the chain, above the rules added by libvirt. Remember, you can optionally insert SNAT rules above the MASQUERADE rules, for efficiency.
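The ordering in the FORWARD chain can also be checked mechanically instead of by eye. The sketch below is one possible way to do it, under the assumption that the chain is listed with "iptables -L FORWARD -n --line-numbers", which prefixes every rule with its position. The function name custom_rules_first is made up for this example.

```shell
#!/bin/sh
# Reads the output of "iptables -L FORWARD -n --line-numbers" on stdin.
# Succeeds when an ACCEPT rule matching "state NEW" appears before the
# first REJECT rule, or when the chain holds no REJECT rule at all.
custom_rules_first() {
    awk '
        $2 == "ACCEPT" && /state NEW/ && !accept { accept = $1 }
        $2 == "REJECT" && !reject                { reject = $1 }
        END { exit !(accept && (!reject || accept + 0 < reject + 0)) }
    '
}
```

On the physical host it could be used as: iptables -L FORWARD -n --line-numbers | custom_rules_first && echo "custom rules are above the REJECT rules".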

Now, we test if we can pull web pages from our two VMs, by using two different public IPs.

First, we try to access the physical host's IP. Since there is no web service on that IP, we should get a connection refused error.

[kamran@kworkhorse ~]$ wget http://192.168.1.201 -O index.html ; cat index.html
--2011-02-09 00:51:55--  http://192.168.1.201/
Connecting to 192.168.1.201:80... failed: Connection refused.
[kamran@kworkhorse ~]$

Good. Just as we expected. Now we access our VMs, one by one, using their individual public IPs.

[kamran@kworkhorse ~]$ wget http://192.168.1.211 -O index.html ; cat index.html
--2011-02-09 00:51:59--  http://192.168.1.211/
Connecting to 192.168.1.211:80... connected.
HTTP request sent, awaiting response... 200 OK
Length: 37 [text/html]
Saving to: “index.html”
100%[====================================================================================>] 37          --.-K/s   in 0s
2011-02-09 00:51:59 (1.51 MB/s) - “index.html” saved [37/37]
Private VM1 inside a XEN host
[kamran@kworkhorse ~]$

Excellent! The first VM is responding. Notice the web content is: "Private VM1 inside a XEN host". Now let's try to access the other VM too.

[kamran@kworkhorse ~]$ wget http://192.168.1.212 -O index.html ; cat index.html
--2011-02-09 00:52:03--  http://192.168.1.212/
Connecting to 192.168.1.212:80... connected.
HTTP request sent, awaiting response... 200 OK
Length: 30 [text/html]
Saving to: “index.html”
100%[====================================================================================>] 30          --.-K/s   in 0s
2011-02-09 00:52:03 (816 KB/s) - “index.html” saved [30/30]
Private VM2 inside a XEN host
[kamran@kworkhorse ~]$

Excellent! The other virtual machine is responding too! Notice the web content: "Private VM2 inside a XEN host".
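The three manual wget checks above can be repeated after every reboot with a small helper. This is only a sketch: the function name check_vm and the FETCH variable are assumptions made for the example, and wget is used as the fetch command because it is what the tests above use.

```shell
#!/bin/sh
# FETCH is the command that retrieves a URL and writes the body to stdout.
FETCH="${FETCH:-wget -q -O -}"

# check_vm <ip> <expected text>
# Succeeds if the page served on http://<ip>/ contains the expected text.
check_vm() {
    $FETCH "http://$1/" 2>/dev/null | grep -qF "$2"
}
```

For example, check_vm 192.168.1.211 "Private VM1 inside a XEN host" && echo "VM1 OK" reports success only if the first VM answers with its own page. The same call against 192.168.1.201 is expected to fail, since no web service runs on the physical host.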

Since we have separate public IPs for these VMs, and the DNAT rules forward all TCP traffic for those IPs, you can directly access any TCP service running on these VMs, such as SSH.

This concludes our work on the main topic.

How to protect the individual VMs?

This is a commonly asked question. People often assume that they have to fill the physical host's rule-set with rules to protect the individual VMs. That is not the case. Once the traffic is successfully forwarded to a particular VM, it is the iptables rules (and other protection mechanisms) on the VM itself which protect it against malicious/unwanted traffic. You would configure a host firewall or protection rules on the VM, the same way you would on a physical host. You can use plain iptables rules, tcp-wrappers, or host firewalls such as CSF on your VMs. This is totally separate from the iptables rules on the physical host.
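As an illustration of rules that live on the VM itself, here is a minimal sketch of a guest-side INPUT policy. It is an assumption-laden example, not a recommended rule-set: ports 22 and 80 are chosen only because SSH and a web server are the services mentioned in this text, and the names protect_vm and IPTABLES are hypothetical. Note that it flushes any existing INPUT rules on the VM.

```shell
#!/bin/sh
# Hypothetical guest-side firewall. Run it on the VM, not on the physical host.
# IPTABLES may be overridden, for example with "echo iptables", for a dry run.
IPTABLES="${IPTABLES:-iptables}"

protect_vm() {
    $IPTABLES -F INPUT                                                   # start from an empty INPUT chain
    $IPTABLES -A INPUT -i lo -j ACCEPT                                   # local traffic
    $IPTABLES -A INPUT -m state --state RELATED,ESTABLISHED -j ACCEPT   # replies to existing connections
    $IPTABLES -A INPUT -p tcp --dport 22 -m state --state NEW -j ACCEPT # SSH
    $IPTABLES -A INPUT -p tcp --dport 80 -m state --state NEW -j ACCEPT # web server
    $IPTABLES -P INPUT DROP                                              # set last, once the ACCEPT rules exist
}
```

Setting IPTABLES="echo iptables" before calling protect_vm prints the commands instead of applying them, which is a safe way to review the rules first.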

Trivia

Probably the most interesting fact is that none of these rules are required in the first place, if you just want to run a VM in the private network (virbr0) only! That is right. If you flush all the rules from your physical host (assuming all chains have their default policy set to ACCEPT), the VMs will still be able to communicate with the physical host and vice versa. However, they will not be accessible from the Internet, as there will be no PREROUTING rules to divert traffic to the VMs. Similarly, they will not be able to access the Internet, as there will be no MASQUERADE/SNAT rules in the POSTROUTING chain.

You might be thinking, "why so many rules in the first place?". The answer is multi-part:

- These rules (added by libvirtd and xend) become important when the default FORWARD policy is set to DROP. In that case, it is necessary to make sure that the traffic between the VMs and the physical host does not get blocked/dropped.

- Both libvirtd and XEN try to be smart. Libvirtd simply pushes all your rules below its own rules, whereas XEN (xend) just adds the (physdev) rule at the end (or beginning) of the current set of rules, for each virtual machine.

- The designers of XEN assumed two situations, especially for the FORWARD chain.

- 1) They assumed that the FORWARD chain on any physical host may be configured with DROP as the default policy, ACCEPTing only specific types of traffic. So, the "-A" mechanism in the /etc/xen/scripts/vif-common.sh script adds an ACCEPT rule for the bridge port of each new VM which is started/powered on.

- 2) If the case explained in (1) does not apply, then the default chain policy may be ACCEPT, with certain ACCEPT rules in the chain, and (most probably) a rule DROPing or REJECTing all other traffic at the bottom of the chain. In such a case, a rule added by XEN at the bottom of the FORWARD rule-set is useless, because the DROP/REJECT rule above it matches first. For that case, XEN provides a mechanism: change "-A" to "-I" in the vif-common.sh script. That way, the PHYSDEV rule is "inserted" at the top/beginning of the FORWARD chain, and the bridge interface of any VM started by XEN is always allowed to send/receive traffic.
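The difference between "-A" and "-I" in situation (2) comes down to first-match evaluation: a chain is walked from top to bottom, and the first matching ACCEPT, DROP or REJECT rule decides the packet's fate. The toy model below sketches that behaviour in plain shell. It does not call iptables, and the rule format ("<pattern> <target>") and the function name verdict are invented for this illustration only.

```shell
#!/bin/sh
# Toy model of first-match evaluation. Each rule is "<pattern> <target>",
# where <pattern> is either "any" or the name of an input interface.
# This is NOT iptables syntax.
verdict() {
    # $1 = interface the packet arrived on; stdin = rules, top to bottom
    while read -r pattern target; do
        if [ "$pattern" = "any" ] || [ "$pattern" = "$1" ]; then
            echo "$target"
            return 0
        fi
    done
    # No rule matched, so the chain policy would apply.
    echo "POLICY"
}

# PHYSDEV-style rule appended with -A: it lands below the catch-all REJECT.
appended="any REJECT
vif1.0 ACCEPT"

# Same rule inserted with -I: it lands above the catch-all REJECT.
inserted="vif1.0 ACCEPT
any REJECT"

printf '%s\n' "$appended" | verdict vif1.0    # prints REJECT
printf '%s\n' "$inserted" | verdict vif1.0    # prints ACCEPT
```

In the appended case the catch-all REJECT matches before the VM's rule is ever reached, which is why changing "-A" to "-I" matters on such hosts.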

References

- https://bugzilla.redhat.com/show_bug.cgi?id=227011

- http://lists.fedoraproject.org/pipermail/virt/2010-January/001792.html

- http://forums.gentoo.org/viewtopic-p-6209192.html?sid=1089acac70de96d68aa856d758d7cdfe

- http://wiki.libvirt.org/page/Networking

- http://libvirt.org/formatnetwork.html#examplesPrivate

Other readings:

- http://www.redhat.com/archives/libvir-list/2007-April/msg00033.html

- http://people.gnome.org/~markmc/virtual-networking.html

- http://www.standingonthebrink.com/index.php/ipv6-ipv4-and-arp-on-xen-for-vps/

- http://www.gossamer-threads.com/lists/xen/users/175478

- http://xen.1045712.n5.nabble.com/PATCH-vif-common-sh-prevent-physdev-match-using-physdev-out-in-the-OUTPUT-FORWARD-and-POSTROUTING-che-td3255945.html

- https://bugzilla.redhat.com/show_bug.cgi?id=512206

- http://xen.1045712.n5.nabble.com/Filtering-traffic-to-Xen-guest-machines-td2585414.html

- http://www.centos.org/modules/newbb/viewtopic.php?topic_id=14676

- http://www.shorewall.net/FAQ.htm

- http://wiki.libvirt.org/page/Networking#Fedora.2FRHEL_Bridging