Virtualization-XEN

From WBITT's Cooker!

Xen

Xen study resources

- Book: The Book of Xen: http://nostarch.com/xen.htm

- Book: Running Xen: http://runningxen.com/

- Xen Architecture Overview: http://wiki.xensource.com/xenwiki/XenArchitecture

- Xen Networking: http://wiki.xensource.com/xenwiki/XenNetworking

- Xen Networking Examples: http://wiki.xensource.com/xenwiki/XenNetworkingExamples

- Xen Networking Suse: http://wiki.xensource.com/xenwiki/XenNetworkingSuse

- Xen Networking Use Case: http://wiki.xensource.com/xenwiki/XenNetworkingUsecase

- LibVirt Networking (Virtual Network) architecture: http://libvirt.org/archnetwork.html

- LibVirt Networking (Virtual Network): http://wiki.libvirt.org/page/Networking

- Xen 4 Release Note: http://wiki.xensource.com/xenwiki/Xen4.0

- Xen 3.0 User Manual: http://tx.downloads.xensource.com/downloads/docs/user/

- Xen Common Problems and Questions: http://wiki.xensource.com/xenwiki/XenCommonProblems

- Xen Best Practices: http://wiki.xensource.com/xenwiki/XenBestPractices

- Xen Commonly Asked Questions by Users on Xen Mailing lists: http://wiki.xensource.com/xenwiki/XenUsersQuestions

- Xen Howtos: http://wiki.xensource.com/xenwiki/HowTos

- Xen Tutorials (mostly videos): http://www.xen.org/support/tutorial.html

- Xen Projects (Management, Cloud, Security, Fault Tolerance, Standards, Real-Time, Misc ): http://www.xen.org/community/projects.html

- Xen Case Studies: http://wiki.xensource.com/xenwiki/Xen_Case_Studies

- Xen (3.2) Live CD to play with: http://wiki.xensource.com/xenwiki/LiveCD

Xen Architecture

- As mentioned earlier, the Xen hypervisor runs directly on the machine's hardware, in place of the operating system. The OS is in fact loaded as a module by GRUB.

- When GRUB boots, it loads the hypervisor, "kernel-xen".

- The hypervisor then creates the initial Xen domain, Domain-0 (Dom-0 for short).

- Dom-0 has full / privileged access to the hardware and to the hypervisor, through its control interfaces.

- xend, the user-space service, is started to support the management utilities, which in turn can install and control other domains and manage the Xen hypervisor itself.

- Securing Dom-0 is critical, and is therefore part of Xen's design. If Dom-0 is compromised, the hypervisor and the other virtual machines/domains on the same machine can also be compromised.

- One Dom-U cannot directly access another Dom-U. All user domains are accessed only by Domain-0.

- In RHEL 5.x the hypervisor uses kernel-xen. The RHEL 5.x user domains also use (their own, individual) kernel-xen to boot up.

- User domains running RHEL 4.5 and higher (4.x) use kernel-xenU as their boot kernel.

- RHEL 4 cannot be used as Dom-0.

- Fedora 4 to Fedora 8 can be used as Dom-0. Later versions, Fedora 9 to 12, cannot be used as Dom-0.

- All versions of Fedora can be used as Dom-U.

- In contrast, fully virtualized hardware virtual machines (user/guest domains) must use the normal "kernel" instead of "kernel-xen".

The Privilege Rings architecture

- Security rings, also known as privilege rings or privilege levels, are a design feature of modern processors. The lowest-numbered ring has the highest privilege and the highest-numbered ring has the lowest privilege. On x86 there are four rings, numbered 0-3.

- In a non-virtualized environment, the normal Linux kernel runs in ring-0, where it has full access to all of the hardware. User-space programs run in ring-3, which has limited access to hardware resources. For any access they need to the hardware, user-space programs make requests to the kernel code running in ring-0.

- Para-virtualization works using ring compression. In this case, the hypervisor itself runs in ring-0. The kernels of Dom-0 and of the para-virtualized Dom-Us (PVMs) run in less privileged rings, in the following manner.

- On 32-bit x86 machines, Dom-0 and Dom-U kernels run in ring-1; and segmentation is used to protect memory.

- On 64-bit (x86-64) architectures, segment limits are not available in 64-bit mode, so segmentation cannot be used for this protection. In that case, the kernel-space and user-space of virtual domains must both run in ring-3. Paging and context switches are used to protect the hypervisor, and also to protect the kernel address space and user-spaces of virtual domains from each other.

- Hardware-assisted full virtualization works differently. The new processor instructions (Intel VT-x and AMD-V) place the CPU in new execution modes, depending on the situation.

- When executing instructions for a hardware-assisted virtual machine (HVM), the CPU switches to "non-privileged" or "non-root" or "guest" mode, in which the VM kernel can run in ring-0 and the userspace can run in ring-3.

- When an instruction arrives which must be trapped by the hypervisor, the CPU leaves this mode and returns to the normal "privileged" or "root" or "host" mode, in which the privileged hypervisor is running in ring-0.

- Each virtual processor of each virtual machine has a virtual machine control block/structure associated with it. This block is 4 KB in size, which is one page. It stores the state of the processor in that particular virtual machine's "guest" mode.

Note that this can be used in parallel with para-virtualization. This means that some virtual machines may be set up to run as para-virtualized while, at the same time and on the same physical host, other virtual machines use the virtualization extensions (Intel VT-x/AMD-V). This is of course only possible on a physical host which has these extensions available and enabled in the CPU.
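
Whether a host's CPU offers these extensions can be read from its CPU flags. The following is a minimal sketch, assuming a Linux host that lists CPU flags in /proc/cpuinfo; the function name hvm_support is only illustrative. The vmx flag corresponds to Intel VT-x and the svm flag to AMD-V. The flag shows what the CPU offers; the extensions may still be switched off in the BIOS, which this check may not reveal.

```shell
# Report which hardware virtualization extension a CPU flag list advertises.
# $1: path to a cpuinfo-style file (assumption: defaults to /proc/cpuinfo).
hvm_support() {
    cpuinfo="${1:-/proc/cpuinfo}"
    # Take the first "flags" line and pad it with spaces so whole words match.
    flags=" $(grep -m1 '^flags' "$cpuinfo" | cut -d: -f2) "
    case "$flags" in
        *" vmx "*) echo "Intel VT-x" ;;
        *" svm "*) echo "AMD-V" ;;
        *)         echo "none" ;;
    esac
}
```

Calling hvm_support without arguments inspects the running host. A result of "none" means that only para-virtualized guests can be expected to work on that machine.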

Xen Networking Concepts / Architecture

This is the most important topic after the basic Xen Architecture concepts. Disks / Virtual Block Devices will follow it.

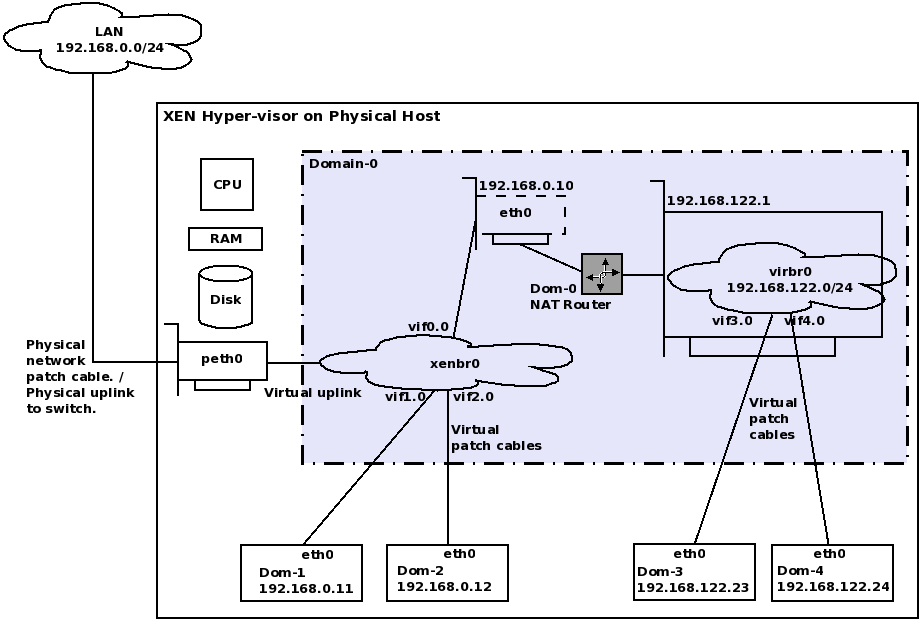

Xen provides two types of network connectivity to the guest OS / Dom-Us.

- Shared Physical Device (xenbr0)

- Virtual Network (virbr0)

- Shared Physical Device (xenbr0)

- When a VM needs an IP address on the same network that the Xen physical host is connected to, it must be "bridged" to the physical network. "xenbr0" is the standard bridge, or virtual switch, which connects VMs to the same network as the physical host itself.

- Xenbr0 never has an IP assigned to it, because it is just a forwarding switch/bridge.

- This kind of connectivity is used when the VMs have publicly accessible services running on them, such as an email server, a web server, etc.

- This is the simpler way of connecting VMs to the network.

- This was the default method of networking VMs in RHEL 5.0.

- Virtual Network (virbr0)

- It is important to note that the Virtual Network is not native to Xen; it is not provided by Xen. In fact, the libvirtd daemon present in Red Hat based distributions starts a separate (private) bridge, virbr0. This bridge is set up for NAT by default and should be considered part of libvirtd rather than Xen. However, it does function like Xen's "network-nat" implementation. That is, Dom-0 runs a dnsmasq service on virbr0 (for DHCP and DNS) and NATs the packets going to and from the Dom-Us.

- http://wiki.libvirt.org/page/Networking

- http://libvirt.org/archnetwork.html

- You "can" use just Xen's networking scripts/methods, without using libvirtd.

- When a VM does not have to be on the same network as the physical host, it can be connected to another type of bridge / virtual switch, which is private to the physical host. This is named "virbr0" in most Red Hat based Linux distributions, as explained above.

- In this case, the Xen physical host is assigned a default IP of 192.168.122.1 and is connected to this private switch. All VMs configured to connect to this switch get an IP from the same private subnet, 192.168.122.0/24. The physical host's 192.168.122.1 interface works as the gateway through which the VMs' traffic leaves the physical host, allowing them to communicate with the outside world.

- This communication is done through NAT. The physical host / Dom-0 acts as a NAT router, and also as a DHCP and DNS server for the virtual machines connected to virbr0. A service named "dnsmasq", running on the physical host / Dom-0, provides DHCP and DNS; the NAT itself is done by iptables rules on Dom-0.

- It should be noted that the DHCP service running on the physical host / Dom-0 does not conflict with any other DHCP server on the network to which the public interface of the physical host is connected, because it serves only the private virbr0 network. Thus it is safe.

- This mechanism is mostly used in test environments, as it allows each developer / administrator to have his own virtual machines, in a sandbox / isolated environment, within his PC / laptop, etc.

- Since the machines do not obtain the IP from the public network of the physical host, the public IPs are not wasted.

- Another advantage is that even if your physical host is not connected to any network, virbr0 still has an IP (192.168.122.1), so all virtual machines and the physical host are always connected to each other. This is not possible in Shared Physical Device mode (xenbr0): if the network cable is unplugged from the physical host and the host does not have an IP of its own, the virtual machines do not have an IP of their own either (unless they are configured with static IPs, of course).

- Some service providers do not provide the bridging functionality (xenbr0). ServerBeach is one of them. Thus I had to use virbr0 and twist the firewall rules to make this virtual machine accessible from outside. (This example is not covered in this text. I may explain it in the CBT.)

- This is the default network connectivity method for VMs in RHEL 5.1 and onwards.

- Each Xen guest domain / Dom-U can have up to three (3) virtual NICs assigned to it. The physical interface on the physical host / Dom-0 is renamed to "peth0" (physical eth0). This becomes the "uplink" from the Xen physical host to the physical LAN switch. In effect, a virtual network cable connects peth0 to the virtual bridge created by Xen.

- Virtual Network Interfaces with the naming scheme of vifD.N are created in Dom-0, as network ports for the bridges, and are connected to the virtual network interfaces (eth0) of each virtual machine. "D" is the "Domain-ID" of the Dom-U and "N" is the "NIC number" in the Dom-U. It should be noted that vifD.N never has an IP assigned to it. The IP is assigned to the actual virtual network interface in the domain it is pointing to.

- Note that both "peth0" and "vifD.N" have the MAC address of "FE:FF:FF:FF:FF:FF". This is because they are (so-called) ports connecting to outer world (in case of peth0), and internal virtual network (in case of vifD.N). This indicates that the actual MAC address of the back-end virtual network interface card of the domain will take precedence.

- Also note that "virbr0" has a MAC address of "00:00:00:00:00:00" in the Xen output below. Remember that this is a NAT router component, and MAC addresses do not survive routing at layer 3. The virtual machines can therefore have any MAC address; when their packets leave through Dom-0, the source MAC is replaced with the MAC address of the eth0 of Dom-0. (This is because the packets are NATed.)

- All virtual NICs of the VMs have MAC addresses that begin with the Xen vendor code (OUI) "00:16:3E".

- Dom-0's physical interface was renamed to peth0, as explained above. Therefore, for Dom-0 to communicate with the world, it needs a network interface of its own. A virtual network interface "eth0" is assigned to it. This eth0 of Dom-0 is then assigned the appropriate IP and is connected to the virtual bridge already created by Xen. The corresponding virtual interface on Dom-0 for this one is vif0.0. See the computer output a little below to understand this.

- For example, if there is a Dom-U named "Fedora-12" with a domain-id of "3", and that Dom-U has only one virtual NIC, "eth0", then there will be an interface defined in Dom-0 by the name vif3.0, which means that a virtual network cable from the Dom-0's bridge is connected to the eth0 of domain "Fedora-12". In the computer output below, this vif (vif3.0) is not shown, because that is a freshly installed Xen server, which doesn't have any VMs created on it at the moment.
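
The vifD.N naming scheme described above can be taken apart mechanically. The following is a small sketch, not part of any Xen tool; the function name parse_vif is hypothetical. It splits an interface name into the domain ID and the NIC number, and rejects names that do not follow the scheme.

```shell
# Split a back-end interface name of the form vifD.N into its parts.
# Prints "<domain-id> <nic-number>", or returns 1 if the name does not match.
parse_vif() {
    name="$1"
    case "$name" in
        vif[0-9]*.[0-9]*) ;;
        *) return 1 ;;
    esac
    rest="${name#vif}"      # e.g. "3.0"
    domid="${rest%%.*}"     # text before the first dot, e.g. "3"
    nic="${rest#*.}"        # text after the first dot, e.g. "0"
    # Reject anything that is not purely numeric on either side of the dot.
    case "$domid$nic" in
        *[!0-9]*) return 1 ;;
    esac
    echo "$domid $nic"
}
```

For the "Fedora-12" example, parse_vif vif3.0 prints "3 0": domain ID 3, first NIC.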

Here is the output of ifconfig command, from a freshly installed Xen physical host. There are a few concepts, which you must master, before you move on.

    [root@xenhost ~]# ifconfig
    eth0      Link encap:Ethernet HWaddr 00:13:72:81:3A:3D
              inet addr:192.168.1.20 Bcast:192.168.1.255 Mask:255.255.255.0
              inet6 addr: fe80::213:72ff:fe81:3a3d/64 Scope:Link
              UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
              RX packets:42 errors:0 dropped:0 overruns:0 frame:0
              TX packets:37 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:5919 (5.7 KiB) TX bytes:5150 (5.0 KiB)

    lo        Link encap:Local Loopback
              inet addr:127.0.0.1 Mask:255.0.0.0
              inet6 addr: ::1/128 Scope:Host
              UP LOOPBACK RUNNING MTU:16436 Metric:1
              RX packets:8 errors:0 dropped:0 overruns:0 frame:0
              TX packets:8 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:588 (588.0 b) TX bytes:588 (588.0 b)

    peth0     Link encap:Ethernet HWaddr FE:FF:FF:FF:FF:FF
              inet6 addr: fe80::fcff:ffff:feff:ffff/64 Scope:Link
              UP BROADCAST RUNNING NOARP MTU:1500 Metric:1
              RX packets:32 errors:0 dropped:0 overruns:0 frame:0
              TX packets:32 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:1000
              RX bytes:5147 (5.0 KiB) TX bytes:4762 (4.6 KiB)
              Interrupt:16 Memory:fe8f0000-fe900000

    vif0.0    Link encap:Ethernet HWaddr FE:FF:FF:FF:FF:FF
              inet6 addr: fe80::fcff:ffff:feff:ffff/64 Scope:Link
              UP BROADCAST RUNNING NOARP MTU:1500 Metric:1
              RX packets:64 errors:0 dropped:0 overruns:0 frame:0
              TX packets:62 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:9412 (9.1 KiB) TX bytes:7239 (7.0 KiB)

    virbr0    Link encap:Ethernet HWaddr 00:00:00:00:00:00
              inet addr:192.168.122.1 Bcast:192.168.122.255 Mask:255.255.255.0
              inet6 addr: fe80::200:ff:fe00:0/64 Scope:Link
              UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
              RX packets:0 errors:0 dropped:0 overruns:0 frame:0
              TX packets:6 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:0 (0.0 b) TX bytes:468 (468.0 b)

    xenbr0    Link encap:Ethernet HWaddr FE:FF:FF:FF:FF:FF
              UP BROADCAST RUNNING NOARP MTU:1500 Metric:1
              RX packets:11 errors:0 dropped:0 overruns:0 frame:0
              TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:578 (578.0 b) TX bytes:0 (0.0 b)

    [root@xenhost ~]#
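
One way to check the points made earlier (peth0, vifD.N and xenbr0 carry no IPv4 address, while eth0 and virbr0 do) is to condense such output to one line per interface. This is a rough sketch that assumes the classic net-tools ifconfig layout shown here ("Link encap:" and "inet addr:" lines); newer ifconfig versions print a different format. The function name ifaces_with_ipv4 is made up for this example.

```shell
# Read classic net-tools ifconfig output on stdin and print one line per
# interface: "<name> <IPv4 address>", with "-" when no IPv4 address is set.
ifaces_with_ipv4() {
    awk '
        /Link encap:/ { if (name != "") print name, ip; name = $1; ip = "-" }
        /inet addr:/  { split($2, a, ":"); ip = a[2] }
        END           { if (name != "") print name, ip }
    '
}
```

It would typically be used as ifconfig | ifaces_with_ipv4 on the Xen host.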

And here is the output of the ifconfig command from a KVM physical host. It is shown here just to illustrate the difference in networking model between Xen and KVM.

    [root@kworkbee ~]# ifconfig
    eth0      Link encap:Ethernet HWaddr 00:1C:23:3F:B8:80
              inet addr:192.168.1.2 Bcast:192.168.1.255 Mask:255.255.255.0
              inet6 addr: fe80::21c:23ff:fe3f:b880/64 Scope:Link
              UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
              RX packets:750557 errors:0 dropped:0 overruns:0 frame:0
              TX packets:503960 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:1000
              RX bytes:857738915 (818.0 MiB) TX bytes:55480683 (52.9 MiB)
              Interrupt:17

    lo        Link encap:Local Loopback
              inet addr:127.0.0.1 Mask:255.0.0.0
              inet6 addr: ::1/128 Scope:Host
              UP LOOPBACK RUNNING MTU:16436 Metric:1
              RX packets:326 errors:0 dropped:0 overruns:0 frame:0
              TX packets:326 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:11701 (11.4 KiB) TX bytes:11701 (11.4 KiB)

    virbr0    Link encap:Ethernet HWaddr 8A:E3:7A:EA:A6:A3
              inet addr:192.168.122.1 Bcast:192.168.122.255 Mask:255.255.255.0
              inet6 addr: fe80::88e3:7aff:feea:a6a3/64 Scope:Link
              UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
              RX packets:0 errors:0 dropped:0 overruns:0 frame:0
              TX packets:144 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:0 (0.0 b) TX bytes:17135 (16.7 KiB)

    [root@kworkbee ~]#

One of the most important pieces of text related to Xen Networking is at http://wiki.xensource.com/xenwiki/XenNetworking . This article has diagrams to explain Xen Networking concepts. Reproducing some of the text here:

Xen creates, by default, seven pairs of "connected virtual ethernet interfaces" for use by dom0. Think of them as two ethernet interfaces connected by an internal crossover ethernet cable. veth0 is connected to vif0.0, veth1 is connected to vif0.1, etc, up to veth7 -> vif0.7. You can use them by configuring IP and MAC addresses on the veth# end, then attaching the vif0.# end to a bridge.

Every time you create a running domU instance, it is assigned a new domain id number. You don't get to pick the number, sorry. The first domU will be id #1. The second one started will be #2, even if #1 isn't running anymore.

For each new domU, Xen creates a new pair of "connected virtual ethernet interfaces", with one end in domU and the other in dom0. For Linux domUs, the device name it sees is eth0. The other end of that virtual ethernet interface pair exists within dom0 as interface vif<id#>.0. For example, domU #5's eth0 is attached to vif5.0. If you create multiple network interfaces for a domU, its ends will be eth0, eth1, etc, whereas the dom0 end will be vif<id#>.0, vif<id#>.1, etc.

When a domU is shut down, the virtual ethernet interfaces for it are deleted.

There is another excellent explanation of some of the Xen Networking Concepts at http://www.novell.com/communities/print/node/4094 . I am reproducing some part of it below. You should still read this document in full.

The following outlines what happens when the default Xen networking script runs on a single-NIC system:

- the script creates a new bridge named xenbr0

- "real" ethernet interface eth0 is brought down

- the IP and MAC addresses of eth0 are copied to virtual network interface veth0

- real interface eth0 is renamed peth0

- virtual interface veth0 is renamed eth0

- peth0 and vif0.0 are attached to bridge xenbr0 as bridge ports

- the bridge, peth0, eth0 and vif0.0 are brought up

The process works wonderfully if there is only one network device present on the system. When multiple NICs are present, this process can be confused or limitations can be encountered.
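
The steps listed above can be written down as a sequence of ordinary ip and brctl commands. The sketch below only prints such a sequence and does not run it. It is a simplified illustration of the listed steps, not the actual Xen network-bridge script, which does more (for example, it also handles routes). The function name bridge_plan and its arguments are assumptions made for this example.

```shell
# Print (do not run) commands that mirror the steps listed above.
# $1 = NIC name, $2 = bridge name, $3 = MAC of the NIC, $4 = IP/prefix of the NIC.
# Assumption: veth0/vif0.0 is the free pair used for Dom-0, as in the text.
bridge_plan() {
    nic="$1" bridge="$2" mac="$3" addr="$4"
    cat <<EOF
brctl addbr $bridge
ip link set $nic down
ip link set veth0 address $mac
ip addr add $addr dev veth0
ip link set $nic name p$nic
ip link set veth0 name $nic
brctl addif $bridge p$nic
brctl addif $bridge vif0.0
ip link set $bridge up
ip link set p$nic up
ip link set $nic up
ip link set vif0.0 up
EOF
}
```

For the host shown earlier, bridge_plan eth0 xenbr0 00:13:72:81:3A:3D 192.168.1.20/24 prints the twelve commands in order, which makes it easy to see where the eth0 to peth0 and veth0 to eth0 renames happen.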

In this process, there are a few things to remember:

- pethX is the physical device, but it has no MAC or IP address

- xenbrX is the bridge between the internal Xen virtual network and the outside network, it does not have a MAC or IP address

- vethX is a usable end-point for either Dom0 or DomU and may or may not have an IP or MAC address

- vifX.X is a floating end-point for vethX's that is connected to the bridge

- ethX is a renamed vethX that is connected to xenbrX via vifX.X and has an IP and MAC address

netloop

In the process of bringing up the networking, veth and vif pairs are brought up. For each veth device, there is a corresponding vif device. The veth devices are given to the DomU's, while the corresponding vif device is attached to the bridge. By default, seven of the veth/vif pairs are brought up. Each physical device consumes a veth/vif pair, thereby reducing the number of veth/vifs available for DomU's.

When a new DomU is started, a free veth/vif pair is used. The vif device is given to the DomU and is presented within DomU as ethX. (Note: the veth/vif pair is loosely like an ethernet cable. The veth end is given to either Dom0 or DomU and the vif end is attached to the bridge.)

For most installations, the idea of having seven virtual machines run at the same time is somewhat difficult (though not impossible). However, for each NIC there has to be a bridge, peth, eth and vif device. Since eth and vif devices are pseudo devices, the number of netloops is decremented for each physical NIC beyond the assumed single NIC.

- With one NIC, 7 veth/vif pairs are present

- Two NICs will reduce the veth/vif pairs available to 5

- Two NICs bonded will reduce the veth/vif available to 4

- Three NICs will reduce the veth/vifs available to 3

- Three NICs bonded presented as a single bond leaves 0 veth/vifs available

- Four NICs will result in a deficit of -1 veth/vifs

- Four NICs bonded into one bond results in a deficit of -3 veth/vifs

- Four NICs bonded into two bonds results in a deficit of -4 veth/vifs

Where most people run into problems is with bonding. The network-multinet script enables the use of bonding. It is easy to see where one could run into trouble with multiple NICs.

The solution is to increase the number of netloop devices, thereby increasing the number of veth/vif pairs available for use.

- In /etc/modprobe.d create and open a file for editing called "netloop"

- Add the following line to it

options netloop nloopbacks=32

- Save the file

- Reboot to activate the setting

It is recommended to increase the number of netloops in any situation where multiple NICs are present. When a deficit of netloops exists, sporadic and odd behavior has been observed, including completely broken networking configuration.
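
The file edit described above can also be scripted so that it is safe to repeat. This is a minimal sketch, assuming the file name /etc/modprobe.d/netloop from the steps above; the function name set_nloopbacks is made up here. It rewrites any existing netloop options line instead of appending a second one. A reboot is still needed afterwards, as stated above.

```shell
# Write "options netloop nloopbacks=N" into a modprobe options file,
# replacing any earlier netloop line so that repeated runs leave one entry.
# $1 = number of loopback pairs, $2 = file (defaults to /etc/modprobe.d/netloop).
set_nloopbacks() {
    count="$1"
    file="${2:-/etc/modprobe.d/netloop}"
    tmp="$(mktemp)" || return 1
    # Keep every line except an existing netloop option, if the file exists.
    if [ -f "$file" ]; then
        grep -v '^options netloop ' "$file" > "$tmp" || true
    fi
    echo "options netloop nloopbacks=$count" >> "$tmp"
    # Copy the content back rather than moving, to keep the file's permissions.
    cat "$tmp" > "$file" && rm -f "$tmp"
}
```

Run as root, set_nloopbacks 32 produces the same single line that the manual steps add.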

Check-list for performing an actual Xen installation on Physical Host

Alright, with the necessary theory covered in the text before this, we will now go into the actual fun part, which is the installation of Xen on a machine. Here are the things to check before you start.

- Make sure that your processor(s) support PAE at a minimum; Xen needs it if para-virtualization is to be used.

- Make sure you have enough processors / processing power for both Dom-0 and Dom-U to function properly.

- If you want to use Hardware-assisted full virtualization, make sure that Intel VT-x/AMD-V extensions are available in your processor(s).

- At least 512 MB RAM for each domain, including Dom-0 and Dom-U. It can be brought down to 384 MB, or even 256 MB in some cases, depending on the software configurations you select.

- Enough space in the active partition of the OS for each VM, if you want to use one large file as the virtual disk of each virtual machine. Xen creates virtual disks in the location /var/lib/xen/images.

- You can also create virtual disks on Logical Volumes and snapshots, as well as on a SAN, normally an iSCSI based IP-SAN.

- Enough free disk area to create raw partitions, which can be used by virtual machines as their virtual disks. In this case, free space in active Linux partitions is irrelevant.

- Install Linux as you would normally. (We are only focusing on RHEL, CentOS and Fedora in this text, though there are other distributions out there too.)

- You will need an X interface on this machine if you want to use virt-manager, the GUI front end for libvirt, which in turn controls Xen, KVM and QEMU.

- You may want to select the package group named "Virtualization" during install process.

- If you did not select the package-group "Virtualization" during install process, install it now.

- Make sure that kernel-xen, xen, libvirt and virt-manager are installed.

- Make sure that your default boot kernel in GRUB is the one with "kernel-xen" in it. You can also set "DEFAULTKERNEL=kernel-xen" in the /etc/sysconfig/kernel file.

- You may or may not want to use SELinux. If you are not comfortable with it, disable it. Xen and KVM are SELinux aware, and work properly (with more security) when used (properly) on top of an SELinux enabled Linux OS.

- Lastly, make sure that xend and libvirtd services are set to ON on boot up.

    chkconfig --level 35 xend on
    chkconfig --level 35 libvirtd on

- It is normally quite helpful to disable unnecessary services, depending on your requirements. I normally disable sendmail, cups, bluetooth, etc, on my servers.

- It is important to know that when creating para-virtual machines, you cannot use an ISO image of your Linux distribution stored on the physical host as the install media for the VMs being created. To create PV machines, you need an exploded version of the install CD of RHEL/CentOS 4.5 or higher, accessible to this physical host. Normally this is done by storing the exploded tree of the installation CD/DVD on the hard disk of the physical host and making it available through NFS, HTTP or FTP. Therefore you must cater for this additional disk space requirement when you install the base OS on the physical host.
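
Two of the checks above, PAE support and RAM per domain, lend themselves to a quick test before Xen is installed. The following is a sketch only, assuming a plain Linux host with /proc/cpuinfo and /proc/meminfo; the function names has_pae and domains_that_fit are hypothetical. Once the host runs as Dom-0, /proc/meminfo reflects Dom-0's memory rather than the whole machine's, so the second function is meant for the pre-install stage.

```shell
# Return 0 if the cpuinfo-style file lists the "pae" CPU flag.
# $1 = file to inspect (assumption: defaults to /proc/cpuinfo).
has_pae() {
    grep -m1 '^flags' "${1:-/proc/cpuinfo}" | grep -qw pae
}

# How many domains of a given size fit into the host's RAM?
# $1 = MB per domain (the text suggests 512), $2 = meminfo-style file.
domains_that_fit() {
    per_domain_mb="${1:-512}"
    meminfo="${2:-/proc/meminfo}"
    total_kb=$(awk '/^MemTotal:/ { print $2 }' "$meminfo")
    # Integer division: kB -> MB, then MB -> number of domains.
    echo $(( ${total_kb:-0} / 1024 / per_domain_mb ))
}
```

The count returned by domains_that_fit includes Dom-0 itself, so a result of 8 leaves room for Dom-0 and seven Dom-Us at 512 MB each.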

Performance issues with Windows HVMs (a Windows HVM consumes a lot of CPU according to xm top)

After installing Windows XP as an HVM, I saw that my Windows VM was consuming a lot of the Xen host's CPU. It was staying at 98% CPU utilization! When I checked Windows XP's Task Manager, XP's own CPU graphs were idling around 20%-30%. This was confusing. After some research, it became clear that the default HVM settings in Xen (and KVM ??) are set up to be on the fail-safe side rather than the performance side. That means that the default Xen HVM config file has ACPI and APIC disabled. The following post helped clear the issue.

Interestingly, shutting down the XP VM and changing the ACPI and APIC parameters inside the Xen config file for my winxp VM did not help. The machine booted into the same state, using 98%+ of the Xen host's CPU. I checked the Device Manager in the Windows VM and found that the CPU of this machine was still listed as an MPS processor. Therefore, I went through the eXtra Pain of installing XP again, which is a one-hour-plus task. This time I deleted the winxp VM's disk file and retained the config file, winxp (of course, I made a backup copy). I then edited the winxp config file, changed ACPI and APIC both to enabled, and started the OS installation. This time, during installation, the CPU utilization was around 50% on average, and when the installation had finished and displayed that famous desktop, I noticed that the CPU utilization of the Xen host had dropped to 1%-3%! Good to go. I also noticed that when I shut down this VM using Windows "Start->Shutdown", the machine stopped and powered itself off properly. Before enabling these parameters/directives, shutting down the winxp VM used to end at the "It is now safe to turn off your computer" screen, and the CPU graph on the Xen host used to remain at 99% usage until I did a "force shutdown" of the winxp VM. This was solved by enabling both ACPI and APIC. I have shown this in a video in the Virtualization with XEN series (video title ??). The new processor detected in the new winxp VM installation is an ACPI-enabled processor. (screenshot ??)

(todo: update both paragraphs above with proper screenshots. )
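
Since the settings have to be in place before the guest OS is installed, it can help to verify the config file first. The sketch below assumes the classic xm-style domain config format, in which these settings appear as acpi = 1 and apic = 1 lines; the function names xen_cfg_value and acpi_apic_enabled are invented for this example. Commented-out lines are ignored, so a default file with "#acpi=1" counts as not enabled.

```shell
# Print the value of a "key = value" setting from a Xen domain config file.
# $1 = key (for example acpi or apic), $2 = path to the config file.
# Lines starting with "#" do not match; the last matching line wins.
xen_cfg_value() {
    sed -n "s/^[[:space:]]*$1[[:space:]]*=[[:space:]]*\([^#[:space:]]*\).*/\1/p" "$2" | tail -n 1
}

# Succeed only if both acpi and apic are set to 1 in the given config file.
acpi_apic_enabled() {
    [ "$(xen_cfg_value acpi "$1")" = "1" ] && [ "$(xen_cfg_value apic "$1")" = "1" ]
}
```

For the winxp config file mentioned above, something like acpi_apic_enabled /path/to/winxp || echo "enable ACPI and APIC before installing" would catch the problem before an hour is spent on the installation.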

About ACPI and APIC from wikipedia (only extracts):

ACPI (Advanced Configuration and Power Interface)

ACPI aims to consolidate and improve upon existing power and configuration standards for hardware devices. It provides a transition from existing standards to entirely ACPI-compliant hardware, with some ACPI operating systems already removing support for legacy hardware. With the intention of replacing Advanced Power Management (APM), the MultiProcessor Specification (MPS) and the Plug and Play (PnP) BIOS Specification, the standard brings power management into operating system control (OSPM), as opposed to the previous BIOS central system, which relied on platform-specific firmware to determine power management and configuration policy.

APIC (Advanced Programmable Interrupt Controller)

In computing, an Advanced Programmable Interrupt Controller (APIC) is a more complex Programmable Interrupt Controller (PIC) than Intel's original types such as the 8259A. APIC devices permit more complex priority schemata, and Advanced IRQ (Interrupt Request) management.

One of the best known APIC architectures, the Intel APIC Architecture, has largely replaced the original 8259A PIC in newer x86 computers, starting with SMP systems when it replaced proprietary SMP solutions and on pretty much all PC compatibles since around late 2000 when Microsoft began encouraging PC vendors to enable it on uniprocessor systems and even made it a requirement of PC 2001 to enable it on desktop systems.

There are two components in the Intel APIC system, the Local APIC (LAPIC) and the I/O APIC. There is one LAPIC in each CPU in the system. There is typically one I/O APIC for each peripheral bus in the system. In original system designs, LAPICs and I/O APICs were connected by a dedicated APIC bus. Newer systems use the system bus for communication between all APIC components.

Operating System Issues with APIC

It can be a cause of system failure, as some versions of some operating systems do not support it properly. If this is the case, disabling I/O APIC may cure the problem. This is done in the following manner:

* For Linux, 'noapic nolapic' kernel parameters

* For FreeBSD, the 'hint.apic.0.disabled' kernel environment variable

* For NetBSD, using userconf ("boot -c" from the boot prompt) command "disable ioapic"

In NetBSD with nforce chipsets from Nvidia, having IOAPIC support enabled in the kernel can cause "nfe0: watchdog timeout" errors.

In Linux, problems with I/O APIC are one of several causes of error messages concerning "spurious 8259A interrupt: IRQ7.". It is also possible that I/O APIC causes problems with network interfaces based on via-rhine driver, causing a transmission time out. Uniprocessor kernels with APIC enabled can cause spurious interrupts to be generated.

Xen Para-Virtual (PV) Drivers for Windows

Important links / help sites:

- http://www.pvwindrivers.org/win2k3.html

- http://wiki.xensource.com/xenwiki/XenWindowsGplPv

- http://docs.vmd.citrix.com/XenServer/4.0.1/guest/ch03s02.html

- http://old.nabble.com/Paravirtual-Windows-Driver-Binaries--td17343879.html

- http://southbrain.com/south/2009/08/xen-drivers-for-windows-2003-m.html

- http://www.jolokianetworks.com/70Knowledge/Virtualization/Open_Source_Windows_Paravirtualization_Drivers_for_Xen

- http://www.meadowcourt.org/downloads/

- http://www.virtuatopia.com/index.php/Installing_and_Running_Windows_XP_or_Vista_as_a_Xen_HVM_domainU_Guest

- http://markmail.org/thread/abwgslqw6n66vk52#query:+page:1+mid:wm7n3sw6wbgybvx5+state:results

- http://markmail.org/thread/jqg5ndxvampmojkw#query:+page:1+mid:jqg5ndxvampmojkw+state:results

- http://lists.xensource.com/archives/html/xen-users/2006-06/msg00599.html